Before we began developing our GUI, we did a lot of research into the professional-grade software packages used by our target demographic: semi-professional to professional videographers and cinematographers. We learned, played with and used non-linear video editing software (Final Cut, Adobe Premiere), compositing software (Shake, Motion, After Effects) and 3D animation packages (Blender, Maya). Additionally, we looked into the FLAIR robot-control software package that’s used by the Milo big-rig motion-controlled robot. FLAIR turned out to be little more than a spreadsheet with a ton of buttons (designed by an engineer, obviously).

We took a lot of the ideas in these packages and implemented them in our own GUI. Our GUI is a web-based interface that connects to a custom multithreaded Python web server on the backend. It makes use of HTML5 technologies (namely <canvas> tags for 2D rendering), jQuery UI for the theming and slider widgets, AJAX (via jQuery) and JSON (JavaScript Object Notation) for transferring data between the GUI, the server, the robot and so on.

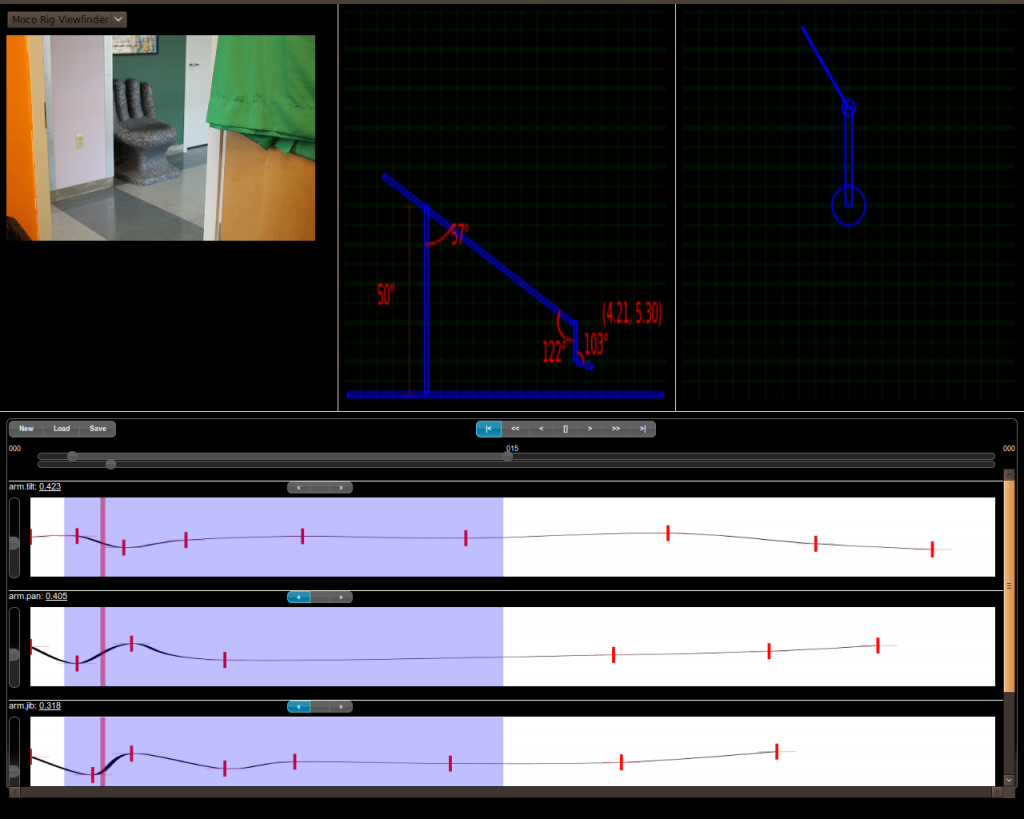

MotionBuilder GUI

Our MotionBuilder GUI is split into two halves. The top half shows all the outputs from the robot: the viewfinder, the skeletal model of the robot’s side profile and the skeletal model of its top profile.

The bottom half of the GUI is the curves editor. The editor consists of a canvas and a vertical slider for each actuator/servo on our robot, as well as for the camera actuators (I’ve disabled those for the time being). The canvas for each actuator is where the motion curves are drawn (remember, in a previous post I mentioned that we had implemented several curve types including discrete, step, linear and Catmull-Rom). The vertical slider lets the user fine-tune the placement of the keypoint wherever the playhead currently sits.
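To make the curve idea concrete, here is a minimal sketch of how a Catmull-Rom motion curve could be sampled at the playhead. It is not our actual curve code: the function names and the `(frame, value)` keyframe layout are assumptions for illustration, and it uses the uniform form of the spline, which ignores uneven spacing between keyframes.

```python
import bisect


def catmull_rom(p0, p1, p2, p3, t):
    """Uniform Catmull-Rom interpolation between p1 and p2 for t in [0, 1]."""
    t2 = t * t
    t3 = t2 * t
    return 0.5 * (
        2.0 * p1
        + (p2 - p0) * t
        + (2.0 * p0 - 5.0 * p1 + 4.0 * p2 - p3) * t2
        + (-p0 + 3.0 * p1 - 3.0 * p2 + p3) * t3
    )


def sample_curve(keyframes, frame):
    """Return the actuator value at `frame`.

    `keyframes` is a hypothetical layout: a list of (frame, value) pairs
    sorted by frame, with at least one entry.
    """
    # Hold the first/last value outside the keyframed range.
    if frame <= keyframes[0][0]:
        return keyframes[0][1]
    if frame >= keyframes[-1][0]:
        return keyframes[-1][1]

    # Find the segment [i, i + 1] that contains the frame.
    frames = [f for f, _ in keyframes]
    i = bisect.bisect_right(frames, frame) - 1
    f1, v1 = keyframes[i]
    f2, v2 = keyframes[i + 1]

    # Duplicate the end values when a neighbouring keyframe is missing.
    v0 = keyframes[i - 1][1] if i > 0 else v1
    v3 = keyframes[i + 2][1] if i + 2 < len(keyframes) else v2

    t = (frame - f1) / (f2 - f1)
    return catmull_rom(v0, v1, v2, v3, t)
```

The curve passes through every keyframe, which is why this family of splines suits keyframe editing: dragging a keypoint moves the curve exactly to where the user put it.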

The playhead is controlled by the frame-scrubber, a horizontal slider that runs the width of the GUI and lies above the actuator curves. Scrubbing across the slider moves the playhead to the chosen frame and publishes the actuator positions for that frame back to the server. This in turn causes the subscribers of the actuator publication (i.e. the skeletal models in the top half of the GUI as well as the robot itself) to move to the new actuator positions.

Above the frame-scrubber is another horizontal slider that sets a frame range by dragging its minimum and maximum handles. This lets the user select a particular sub-range of the animation to play back.

The playback controls are above the scrubber sliders and include “go-to-beginning-of-range”, “fast-rewind-play”, “rewind-play”, “stop”, “forward-play”, “fast-forward-play” and “go-to-end-of-range” buttons. We use a JavaScript setInterval timer to step through the frames during playback, so the playback speed is NOT representative of the final timing of the animation. It’s mainly used for previewing the shot.

To the left of the playback controls we have the curve-set buttons that allow the user to clear the editor and start with a new curve set, load an existing curve set (from a file on the server) or save the current curve set (to a file on the server).

Finally, we have some keyframe buttons above each actuator curve canvas: one to move the playhead to the previous keyframe on that curve, one to move it to the next keyframe on that curve and one to toggle the existence of a keyframe at the playhead (this is how you delete keyframes).
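The logic behind those three buttons is simple enough to sketch. The real GUI does this in the browser; the version below is a Python illustration only, and the function names and the sorted list of keyframe frame numbers are assumptions, not our actual data model.

```python
import bisect


def prev_keyframe(frames, playhead):
    """Return the closest keyframe strictly before the playhead, or None."""
    i = bisect.bisect_left(frames, playhead)
    return frames[i - 1] if i > 0 else None


def next_keyframe(frames, playhead):
    """Return the closest keyframe strictly after the playhead, or None."""
    i = bisect.bisect_right(frames, playhead)
    return frames[i] if i < len(frames) else None


def toggle_keyframe(frames, playhead):
    """Remove the keyframe at the playhead if present, otherwise add one.

    `frames` is a sorted list of frame numbers and is modified in place.
    Returns True if a keyframe now exists at the playhead.
    """
    i = bisect.bisect_left(frames, playhead)
    if i < len(frames) and frames[i] == playhead:
        del frames[i]
        return False
    frames.insert(i, playhead)
    return True
```

Keeping the frame numbers sorted means each button is a single binary search, so it stays cheap even on a curve with many keyframes.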

Under the Hood

Our web server is a custom-written (raw-socket-based) multithreaded Python server. We created a “publisher/subscriber” streaming paradigm where any given web client can connect to the server and offer to “publish” data (via a URI) and any number of clients can connect to the server and “subscribe” to that data (via the same URI). Subscribers are added to a queue for the URI and a thread is fired up for each publisher of a URI. Whenever a publisher pushes data, it is broadcast to every client in that URI’s queue as fast as possible (with very little buffering). This technique lets us broadcast streams of data/images to any number of clients regardless of what they intend to do with the data, and it affords us a great amount of flexibility and scalability for adding more subsystems to our software. You’ll see an example of this with our compositor later.
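For readers who want a feel for the pattern, here is a stripped-down sketch of a publish/subscribe hub keyed by URI. It is not our server code: `StreamHub`, its method names and the drop-oldest policy are assumptions for illustration, and the socket handling and per-publisher threads are left out.

```python
import queue
import threading


class StreamHub:
    """Hypothetical in-memory hub: publishers push to a URI, subscribers read."""

    def __init__(self):
        self._lock = threading.Lock()
        self._subscribers = {}  # uri -> list of queue.Queue, one per client

    def subscribe(self, uri, maxsize=2):
        """Register a new client on `uri` and return its private queue."""
        q = queue.Queue(maxsize=maxsize)
        with self._lock:
            self._subscribers.setdefault(uri, []).append(q)
        return q

    def unsubscribe(self, uri, q):
        """Remove a client's queue, e.g. when its socket closes."""
        with self._lock:
            subs = self._subscribers.get(uri, [])
            if q in subs:
                subs.remove(q)

    def publish(self, uri, message):
        """Broadcast `message` to every subscriber of `uri` without blocking."""
        with self._lock:
            subs = list(self._subscribers.get(uri, []))
        for q in subs:
            try:
                q.put_nowait(message)
            except queue.Full:
                # Assumed policy: a slow client loses its oldest message
                # so that it always sees the most recent data.
                try:
                    q.get_nowait()
                    q.put_nowait(message)
                except (queue.Empty, queue.Full):
                    pass  # lost a race with another thread; skip this one
```

A small bounded queue per client is one way to get the “very little buffering” behaviour: a sluggish subscriber never holds up the publisher or the other subscribers.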

Image streaming from the server is accomplished via the old-school Netscape “multipart/x-mixed-replace” content (MIME) type… it’s what those web-enabled streaming spycams and monitoring cameras use. Most modern browsers support this MIME type (and we don’t bother to support or test IE at all).
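On the wire, a multipart/x-mixed-replace stream is just one HTTP response whose body is an endless series of parts separated by a boundary string, where each new part replaces the previous one in the browser. The sketch below shows the framing only; the boundary name and the choice of JPEG frames are assumptions for illustration, not a description of our server.

```python
BOUNDARY = b"frameboundary"  # hypothetical boundary string


def stream_headers():
    """Response headers sent once, when the client first connects."""
    return b"".join([
        b"HTTP/1.1 200 OK\r\n",
        b"Content-Type: multipart/x-mixed-replace; boundary=" + BOUNDARY + b"\r\n",
        b"Cache-Control: no-cache\r\n",
        b"\r\n",
    ])


def frame_part(image_bytes):
    """One part of the stream; the browser swaps it in for the previous image."""
    return b"".join([
        b"--" + BOUNDARY + b"\r\n",
        b"Content-Type: image/jpeg\r\n",  # assumes JPEG-encoded frames
        b"Content-Length: " + str(len(image_bytes)).encode("ascii") + b"\r\n",
        b"\r\n",
        image_bytes,
        b"\r\n",
    ])
```

A server would write `stream_headers()` to the socket once and then `frame_part(...)` for every new image, keeping the connection open for as long as the client stays subscribed.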

Data streaming from the server uses the old long-polling script-tag technique (I think it’s referred to as Comet nowadays)… basically the server keeps the connection open and sends the data as JSON strings, each wrapped in a JavaScript callback function call and enveloped in <script> tags. Most browsers execute the JavaScript as soon as the closing script tag arrives (it’s a throwback to old-day compatibility). So as long as the server keeps the socket open, it can send all the data it likes and the client will process it one piece after another.
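As a rough illustration of that envelope, each message the server writes could be built like this. The function name and the callback name in the usage line are made up for the example; the only real requirement is that the page already defines a JavaScript function with the matching name.

```python
import json


def script_chunk(callback, payload):
    """Wrap a JSON payload in a callback call inside a <script> element.

    `callback` is the name of a JavaScript function the page is assumed
    to define; `payload` is any JSON-serialisable Python object.
    """
    body = json.dumps(payload)
    # Escape "</" so a string value such as "</script>" cannot close the
    # tag early; "<\/" is still valid inside JSON and JavaScript strings.
    body = body.replace("</", "<\\/")
    return ("<script>%s(%s);</script>\n" % (callback, body)).encode("utf-8")
```

For example, `script_chunk("onActuators", {"frame": 12})` produces one self-contained chunk that the browser runs as soon as the closing tag arrives.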

Communication to the server (for stream-like functionality such as scrubbing the sliders) is just AJAX repeatedly sending JSON via HTTP GET requests. Surprisingly, the TCP handshake and HTTP request overhead aren’t too bad when hammering away at the server… especially since the biggest bottleneck is the real-world servos (the user subconsciously moves those sliders slowly when they notice the robot sluggishly getting into position).
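Since the server works on raw sockets, the receiving side of one of those GET requests boils down to picking the JSON out of the request line. Here is a minimal sketch of that step, assuming the JSON travels in a query parameter named `data`; that parameter name and the URI in the usage note are hypothetical.

```python
import json
from urllib.parse import parse_qs, urlsplit


def parse_publish_request(request_line):
    """Extract (path, payload) from a request line such as
    'GET /some/uri?data=<url-encoded JSON> HTTP/1.1'.

    The 'data' parameter name is an assumption made for this sketch.
    """
    method, target, _version = request_line.strip().split(" ", 2)
    if method != "GET":
        raise ValueError("expected a GET request, got %r" % method)
    parts = urlsplit(target)
    params = parse_qs(parts.query)
    if "data" not in params:
        raise ValueError("missing 'data' query parameter")
    return parts.path, json.loads(params["data"][0])
```

A request line like `GET /publish/actuators?data=%7B%22frame%22%3A12%7D HTTP/1.1` would come back as the path `/publish/actuators` together with the decoded dictionary, ready to hand to whatever publishes it to the subscribers.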

I’m sure there’s more I’m forgetting to mention… I’ll save that for a later post.