This week, Campfire continued to build our technical foundation and used last week's playtesting results to brainstorm what kind of interaction we want to create.

At the start of the week, Scott and Ralph challenged us to think about what might pull our guests “out of the experience” and about strategies to draw them back in. Our initial “Wizard of Oz” prototype showed us that the Q&A approach needs a tremendous amount of recorded, scripted content, which might be at odds with our goal of incorporating AI/machine learning.

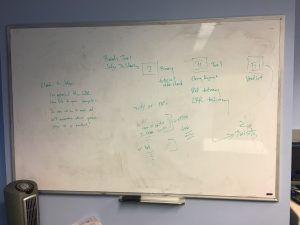

After brainstorming about content and revisiting why we pitched this project last semester, we came away with several points. First, we want to create an immersive audio environment. Second, we want to explore how AI and machine learning could integrate into that environment, using the Amazon Echo and Alexa. Third, the narrative could be nonfiction or fiction, and we want to focus on “customization” over “choose your own adventure.” To that end, we decided to focus our next prototype on the types of interactions our technology can currently support: binary choices and a “remote controller” interaction (skip, fast forward, rewind, replay, etc.). Built around these interactions, the narrative for our next technology goal casts the guest as the central character, a judge who uses her/his Computer (Alexa) to review evidence before delivering a bench verdict. In addition to the guest and Alexa, a scripted character will join the experience as a clerk, driving the story forward. Over the weekend, we're developing an outline and script, and next week Seth will work on incorporating these elements into the audio landscape of voice recordings and SFX.
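To make the “remote controller” interaction concrete, here is a minimal sketch that treats the story as an ordered list of audio segments with a cursor that each command moves. The `StoryPlayer` class, the segment names, and the choice to have fast forward jump two segments (rather than seeking within a clip) are all hypothetical assumptions for illustration, not part of our current build.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class StoryPlayer:
    """Hypothetical cursor over an ordered list of narrative audio segments."""
    segments: List[str]
    index: int = 0
    ff_step: int = 2  # assumption: fast forward jumps this many segments

    def current(self) -> str:
        return self.segments[self.index]

    def handle(self, command: str) -> str:
        """Apply a remote-controller command and return the segment to play."""
        last = len(self.segments) - 1
        if command == "skip":
            self.index = min(self.index + 1, last)  # next segment, clamped at the end
        elif command == "fast_forward":
            self.index = min(self.index + self.ff_step, last)
        elif command == "rewind":
            self.index = max(self.index - 1, 0)  # previous segment, clamped at the start
        elif command == "replay":
            pass  # stay on the current segment and play it again
        else:
            raise ValueError(f"unknown command: {command}")
        return self.current()


player = StoryPlayer(["intro", "evidence_a", "evidence_b", "evidence_c", "verdict"])
print(player.handle("skip"))          # evidence_a
print(player.handle("fast_forward"))  # evidence_c
```

Clamping at both ends means a guest who keeps saying “rewind” or “skip” simply stays on the first or last segment instead of breaking out of the story.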

Sound Design & Research:

In the early stages of developing audio to support a narrative experience, we gathered sound design examples from a variety of related media.

- Examining old-time radio dramas (e.g. The Shadow, War of the Worlds, The Hitchhiker’s Guide to the Galaxy, among others) showed us how background music, foley, narration and character acting can be timed and mixed together to maximize audience engagement. Audiobook dramatizations tend to follow this model.

- Narrative podcasts (e.g. The Moth, Serial, 99% Invisible, etc.) often seem to focus less on dramatically unveiling events, and so make less use of sound effects. Instead, their sound design relies more heavily on curated ambient and background music to carry emotional content and connect one scene to the next.

We also reviewed other audio structures and sound design passages.

- Among movies, “The Princess Bride” has been a recurring reference for us because of its creative combination of narration and character-acted storytelling. Other examples, from directors like David Lynch, Terrence Malick and Sergio Leone, show how audio can create tension and exercise indirect control over the audience.

- Storytelling and concept albums (e.g. Harry Nilsson’s “The Point”, The Flaming Lips’ “Yoshimi Battles the Pink Robots”, and others) show how to create a musical moment within a story, should we choose to take that path.

- Selections from ambient recording artists, like Aphex Twin, inspired us with the kinds of music that can support, but not overpower, voice recordings.

- Finally, we began reviewing sound design elements from the Sonniss and Spitfire Audio sound libraries.

Technology:

On the technology side, Roy tested different features of IBM Watson for building a chatbot, using the AlchemyLanguage, Natural Language Classifier and Retrieve and Rank APIs. He evaluated whether we could use them in our own AI later on, to classify user intent and to retrieve information to pass back to the guest. Roy and Phan started implementing the front end of a basic Alexa Skill prototype to set up the core interactions for our next Alexa prototype (the judge narrative). Phan also tested the AI's ability to respond to binary questions, focusing on how it handles vague or incomplete phrases, and moved our project from his initial Heroku setup onto the ETC server. Phan's work on the Alexa framework (how to communicate with Alexa) and on the basic framework for the story tool will let Seth and Sarabeth build out the design and interaction of the narrative prototypes in the coming weeks.
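For readers curious how a binary question can reach the story logic, here is a minimal sketch of a skill endpoint, assuming a plain Python webhook (for example, one hosted on Heroku or the ETC server) that receives the Alexa Skills Kit request JSON. `AMAZON.YesIntent` and `AMAZON.NoIntent` are Alexa built-in intents; the `handle_request` and `speak` functions, the clerk's lines, and the decision to re-ask on anything unrecognized are hypothetical. A production endpoint must also verify Alexa's request signatures, which this sketch omits.

```python
from typing import Any, Dict

# Hypothetical clerk lines for the judge narrative, not our actual script.
QUESTION = "Counsel asks to admit the letter as evidence. Do you allow it?"
ANSWERS = {
    "AMAZON.YesIntent": "Very well, Your Honor. The letter is admitted.",
    "AMAZON.NoIntent": "Understood, Your Honor. The letter is excluded.",
}


def speak(text: str, end_session: bool = False) -> Dict[str, Any]:
    """Build a minimal Alexa Skills Kit response envelope with plain-text speech."""
    return {
        "version": "1.0",
        "response": {
            "outputSpeech": {"type": "PlainText", "text": text},
            "shouldEndSession": end_session,
        },
    }


def handle_request(event: Dict[str, Any]) -> Dict[str, Any]:
    """Route an incoming Alexa request to a yes/no branch of the story."""
    request = event["request"]
    if request["type"] == "SessionEndedRequest":
        # Alexa does not speak a response to this request type.
        return {"version": "1.0", "response": {}}
    if request["type"] == "LaunchRequest":
        return speak(QUESTION)
    if request["type"] == "IntentRequest":
        intent = request["intent"]["name"]
        if intent in ANSWERS:
            return speak(ANSWERS[intent])
    # Anything else (e.g. a vague or incomplete phrase that did not resolve
    # to yes or no): have the clerk re-ask instead of guessing.
    return speak("Sorry, Your Honor, was that a yes or a no? " + QUESTION)
```

Re-asking on unrecognized input keeps the guest inside the courtroom fiction, which is one way to handle the vague or incomplete phrases Phan was testing.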

Other Updates & Next Week:

In addition to working on the next prototype, Seth created a draft of our branding materials, including a logo and poster design. We took our team picture, featured above (with Photoshop assistance from Yen). We also Skyped with our classmates in SV (Sweet Talk), who are working on a VR experience using the Vive and Alexa voice commands.

Looking ahead, we will continue working toward our next prototype goal ahead of 1/4 Review, finish up our branding work, and schedule a Skype conference with Rich Hilleman (Amazon) to discuss our progress to date.