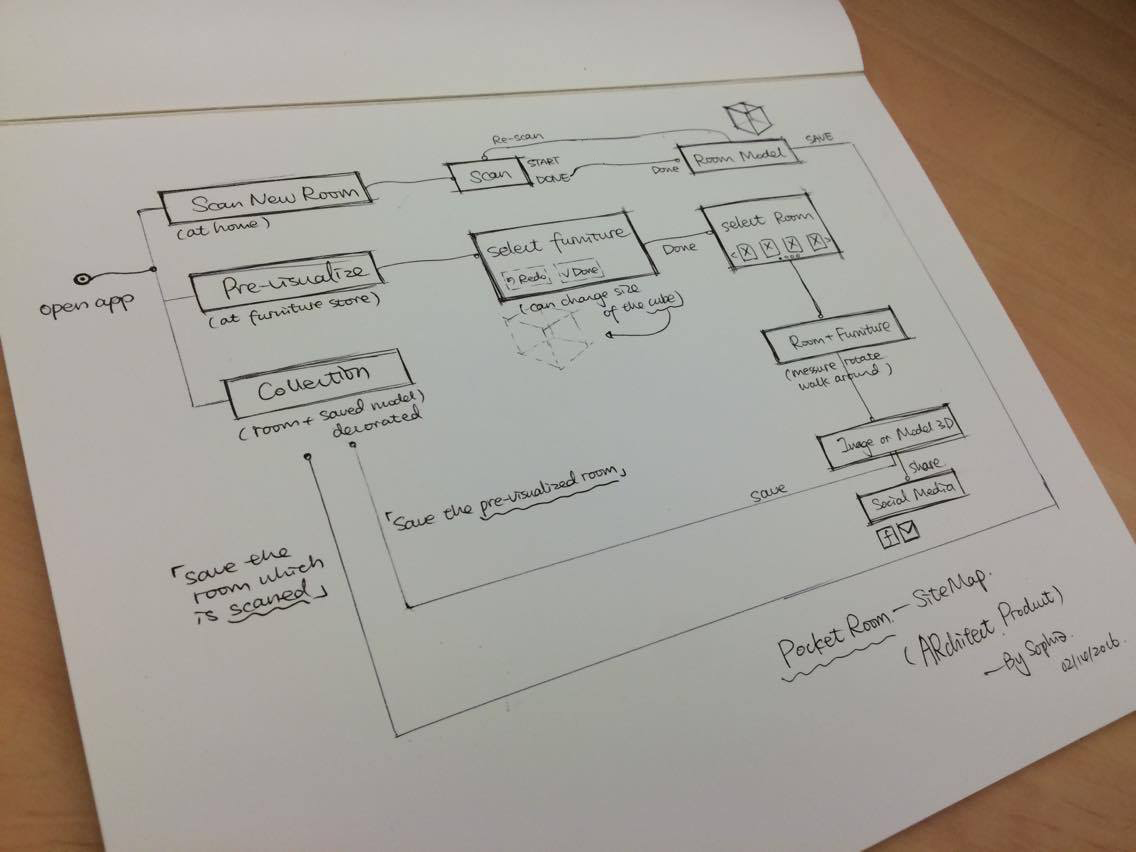

At the beginning of the week, we settled on our user experience flow, which has two parts: scanning and pre-visualization. In scanning mode, users hold the tablet with the Structure Sensor attached, scan a room, and save the scanned model to the gallery. In pre-visualization mode, users first select a piece of furniture in the camera feed and then load a virtual room into the real environment. Next, they adjust the virtual room and walk around to observe it. When they find a good point of view, they can take a screenshot and save it.

Based on the user experience flow, we decided to work on the following main features:

- Map the virtual room onto the real world (Jack)
  - Keep the virtual room at the same scale as the real room
  - Land the virtual room on the real-world floor
- Walk around to observe the real room (Qing)
  - Activate positional tracking so users can walk around inside the virtual room to observe it
- Select real furniture with the camera feed
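The mapping and positional-tracking items above both come down to rigid transforms. The Python sketch below is not the project's code; it assumes the scan is stored in meters (so applying only a translation keeps it at 1:1 scale), that the real floor height `floor_y` in tracking coordinates is already known, and that positional tracking reports the camera pose as a camera-to-world rotation and position. `land_on_floor` and `world_to_camera` are hypothetical helper names.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Mat3 = Tuple[Vec3, Vec3, Vec3]  # row-major 3x3 rotation matrix


def land_on_floor(vertices: List[Vec3], floor_y: float) -> List[Vec3]:
    """Shift a scanned room vertically so its lowest vertex rests on the real floor.

    Only a translation is applied, never a scale, so a scan recorded in
    meters keeps the same size as the real room.
    """
    dy = floor_y - min(y for _, y, _ in vertices)
    return [(x, y + dy, z) for x, y, z in vertices]


def world_to_camera(p: Vec3, cam_rot: Mat3, cam_pos: Vec3) -> Vec3:
    """Express a world-space point in the tracked camera's frame.

    cam_rot and cam_pos are the camera-to-world pose from positional
    tracking; the view transform is its inverse: p_cam = R^T (p - t).
    """
    d = [pi - ti for pi, ti in zip(p, cam_pos)]
    # (R^T d)_j = sum_i R[i][j] * d[i]
    return tuple(sum(cam_rot[i][j] * d[i] for i in range(3)) for j in range(3))


# Usage
room = [(0.0, -1.5, 0.0), (4.0, 1.0, 3.0)]  # the scan's floor sits at y = -1.5
print(land_on_floor(room, floor_y=0.0))     # [(0.0, 0.0, 0.0), (4.0, 2.5, 3.0)]

identity = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))
point = (0.0, 1.6, 3.0)  # a spot on the virtual room's wall
print(world_to_camera(point, identity, (0.0, 1.6, 0.0)))  # (0.0, 0.0, 3.0)
print(world_to_camera(point, identity, (0.0, 1.6, 1.0)))  # (0.0, 0.0, 2.0)
```

Because the room is transformed once and only the camera pose changes as the user walks, the point stays fixed in the world and simply gets closer (3 m, then 2 m). A real scan may contain stray points below the floor, so a more robust version might use a low percentile of the heights instead of the minimum.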

To select real furniture, we want the real-time camera feed overlaid on top of the scanned model, with the camera feed showing only the furniture. We considered a few different approaches for implementing this:

- Approach #1: Bounding box on the camera feed (Atit)
  - Ask users to place a bounding box that contains the furniture. Only the region inside the bounding box is rendered from the camera feed; everything outside it shows the scanned room.
- Approach #2: Bounding box + real-time scanning + culling everything outside the furniture's shape
  - The idea is similar to the first approach, but we use the bounding box to run real-time scanning so that we get a mesh of the furniture. Once we have the furniture geometry, we project it onto the screen to get its outline, then render that outline with a shader fed by the camera image. This should make the furniture appear to jump out of the scanned room (just like in the previz video).
- Approach #3: Let users prescan the furniture, then import it as a model into the room they already scanned.
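Approaches #1 and #2 above differ mainly in the per-pixel mask that decides whether a pixel comes from the camera feed or from the rendered scanned room. The Python sketch below illustrates Approach #1 under stated assumptions: the camera image and the room render have the same size and are produced from the same tracked pose, the bounding-box corners are already in camera coordinates with +z pointing in front of the camera, and `fx`, `fy`, `cx`, `cy` are pinhole intrinsics. All function names are hypothetical, and a real app would do this in a shader rather than in Python lists.

```python
import math
from itertools import product
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Pixel = Tuple[int, int, int]  # RGB
Image = List[List[Pixel]]     # image[row][col]
Mask = List[List[bool]]       # True = take the camera pixel


def project(p: Vec3, fx: float, fy: float, cx: float, cy: float) -> Tuple[float, float]:
    """Pinhole projection of a camera-space point (z > 0 is in front)."""
    x, y, z = p
    return fx * x / z + cx, fy * y / z + cy


def box_rect(corners: Sequence[Vec3], fx: float, fy: float, cx: float, cy: float,
             width: int, height: int) -> Tuple[int, int, int, int]:
    """(left, top, right, bottom) pixel rectangle, right/bottom exclusive, that
    covers every projected box corner, clipped to the image."""
    if any(z <= 0 for _, _, z in corners):
        raise ValueError("bounding box must lie entirely in front of the camera")
    uv = [project(c, fx, fy, cx, cy) for c in corners]
    left = max(0, math.floor(min(u for u, _ in uv)))
    top = max(0, math.floor(min(v for _, v in uv)))
    right = min(width, math.ceil(max(u for u, _ in uv)))
    bottom = min(height, math.ceil(max(v for _, v in uv)))
    return left, top, right, bottom


def rect_mask(rect: Tuple[int, int, int, int], width: int, height: int) -> Mask:
    """Approach #1: every pixel inside the rectangle comes from the camera feed."""
    left, top, right, bottom = rect
    return [[left <= c < right and top <= r < bottom for c in range(width)]
            for r in range(height)]


def composite(camera: Image, rendered: Image, mask: Mask) -> Image:
    """Camera pixel where the mask is True, scanned-room pixel elsewhere."""
    return [[cam if keep else room for cam, room, keep in zip(cam_row, room_row, mask_row)]
            for cam_row, room_row, mask_row in zip(camera, rendered, mask)]


# Usage: a 4x4 frame and a 1 m wide box placed 1-2 m in front of the camera.
W = H = 4
corners = list(product((-0.5, 0.5), (-0.5, 0.5), (1.0, 2.0)))
rect = box_rect(corners, fx=2.0, fy=2.0, cx=2.0, cy=2.0, width=W, height=H)
camera = [[(255, 0, 0)] * W for _ in range(H)]    # stand-in camera frame (red)
rendered = [[(0, 0, 255)] * W for _ in range(H)]  # stand-in room render (blue)
frame = composite(camera, rendered, rect_mask(rect, W, H))
print(rect)  # (1, 1, 3, 3)
```

Keeping the mask separate from `composite` is a deliberate choice: for Approach #2, `rect_mask` could be swapped for a silhouette mask obtained by rendering the live-scanned furniture mesh from the same pose, while the compositing step stays unchanged.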

We contacted Occipital for suggestions, and they generously gave us valuable feedback. We scheduled a meetup for next week so that we can exchange our spatial concepts more easily.

We have already completed the save and reload function for the scanned room, and we have almost finished mapping the scanned room onto the real world at the same scale. Now we are trying to work out how to composite the real furniture from the camera feed into the scanned room. Overall, we have started digging into the problem and are doing our best to achieve further technical breakthroughs.
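The post does not say how scanned rooms are stored for the save and reload function mentioned above. As one possible approach, not necessarily the one used here, a triangle mesh can be written to and read back from the plain-text Wavefront OBJ format. The Python sketch below uses hypothetical `save_obj`/`load_obj` helpers, keeps only vertex positions and triangular faces, and ignores normals, colors, and texture coordinates that a real scan may also carry.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]
Face = Tuple[int, int, int]  # 0-based vertex indices of one triangle


def save_obj(path: str, vertices: List[Vec3], faces: List[Face]) -> None:
    """Write a triangle mesh as Wavefront OBJ (positions and faces only)."""
    with open(path, "w") as f:
        for x, y, z in vertices:
            f.write(f"v {x!r} {y!r} {z!r}\n")  # repr() round-trips floats exactly
        for a, b, c in faces:
            f.write(f"f {a + 1} {b + 1} {c + 1}\n")  # OBJ indices are 1-based


def load_obj(path: str) -> Tuple[List[Vec3], List[Face]]:
    """Read positions and faces back; assumes triangulated faces with positive
    indices and skips every other OBJ record."""
    vertices: List[Vec3] = []
    faces: List[Face] = []
    with open(path) as f:
        for line in f:
            parts = line.split()
            if not parts:
                continue
            if parts[0] == "v":
                x, y, z = (float(s) for s in parts[1:4])
                vertices.append((x, y, z))
            elif parts[0] == "f":
                # Entries may look like "3/7/2"; keep only the vertex index.
                a, b, c = (int(s.split("/")[0]) - 1 for s in parts[1:4])
                faces.append((a, b, c))
    return vertices, faces
```

Saving a mesh and loading it again returns the same vertex and face lists, so a reloaded room lands at exactly the coordinates it was scanned with.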