While I was in Bat-teK last semester someone had mentioned that there was a package called OpenCV that could do facial tracking. So on a whim I downloaded it and looked through the API and example code. In particular there was one example of using the Python binding with facial tracking.

Side Note: Installing OpenCV was a pain in the butt since Ubuntu already came with the 1.0.* package in the repository and I couldn’t for the life of me get OpenCV 2.0’s Python modules working correctly. So I just used the old module interface, since OpenCV 2.0 still supports it anyway (for now). I have a thing or ten to say about the OpenCV API but I’ll bite my tongue since children may be reading this blog.

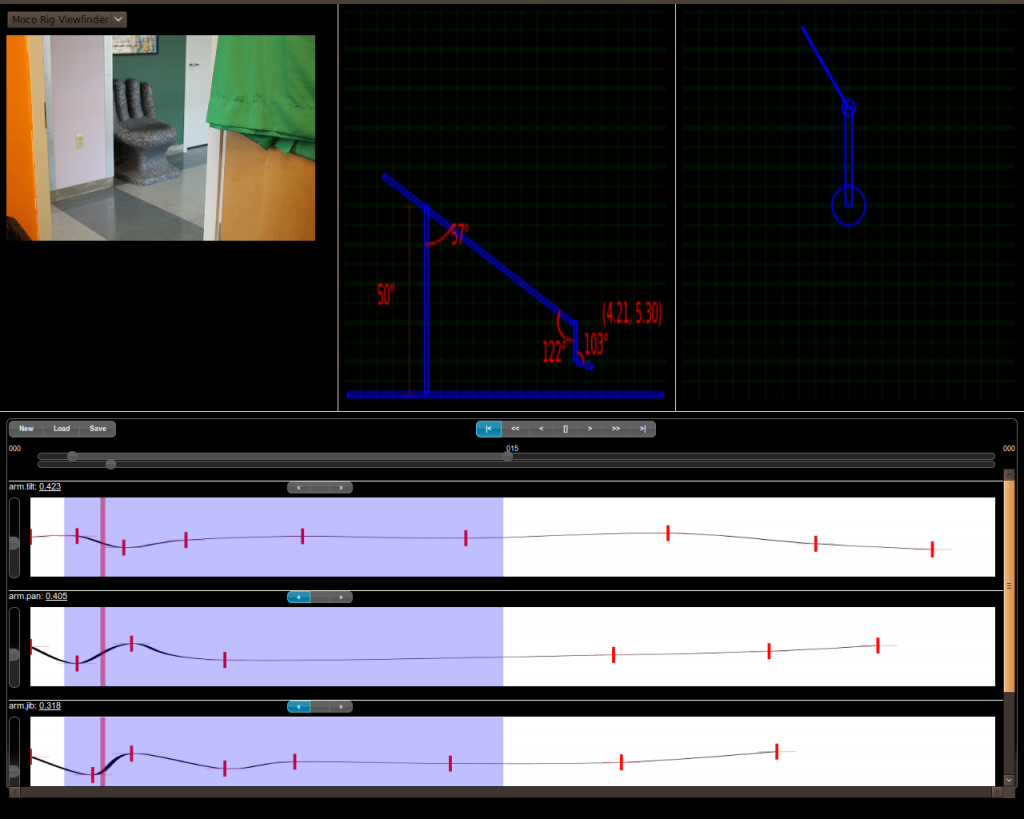

Anyways, MocoFacialTracking is a separate standalone program that connects to the MocoServer to do passive facial tracking and actuator movements. I ripped out the important bits from the facial tracking example and wrote a viewfinder Subscriber that pulls in the live video stream from the camera. Then it applies the OpenCV facial tracking algorithm (which probabilistically returns a bounding square for each of the human faces it finds in the image, using the training set it comes with). I then pick the biggest of these bounding squares, find its midpoint, and compute the vector from the middle of the frame to that midpoint (this determines the speed at which to move to bring the face to the center of the frame). After that I publish new actuator movements to the server, which in turn moves the pan/tilt head to bring the face midpoint to the frame midpoint.

There isn’t a surefire way to know how far to move the servos to center the face (you’d have to figure out the distance from the face to the camera and the field of view at that distance to find the frame edges and interpolate the pan/tilt absolutely). So instead I just move the pan/tilt servos a very tiny amount, multiplied by the distance-to-center vector I calculated earlier for some extra oomph and to slow down when the face is close to center. But remember that the image is being streamed continuously and the facial tracking is being done on every frame it can get. This means the facial tracking is continuously running and adjusting the servos incrementally. In the long run it comes off as smooth (albeit slow) movement to center the face in the frame.

The biggest issue was the facial tracking bottleneck, which can back up the image stream (and also the server on the other end). To solve this I made the viewfinder subscriber a thread that rips through the incoming stream as fast as possible, updating the latest image internally, while another thread does the facial tracking and actuator publications.
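To make the centering step concrete, here’s a rough sketch of the idea in Python. This is not the actual MocoFacialTracking code: the function names, the `(x, y, w, h)` box format, the `gain` value and the sign convention for pan/tilt are all assumptions I’m making for illustration, and the real servo direction depends on how the head is mounted.

```python
def largest_face(faces):
    """Return the (x, y, w, h) box with the biggest area, or None if there are none.

    `faces` is assumed to be a list of (x, y, w, h) tuples in pixels,
    which is the general shape a face detector hands back.
    """
    if len(faces) == 0:
        return None
    return max(faces, key=lambda f: f[2] * f[3])


def centering_step(faces, frame_w, frame_h, gain=0.05):
    """Compute a small (pan, tilt) nudge toward the biggest face.

    The offset from frame center is normalized to [-1, 1] on each axis and
    scaled by `gain` (a made-up tuning constant), so the nudge shrinks as
    the face approaches the center and is zero once it gets there.
    """
    face = largest_face(faces)
    if face is None:
        return (0.0, 0.0)  # nobody in frame, so don't move

    x, y, w, h = face
    face_cx = x + w / 2.0
    face_cy = y + h / 2.0

    # Vector from the frame midpoint to the face midpoint, normalized.
    dx = (face_cx - frame_w / 2.0) / (frame_w / 2.0)
    dy = (face_cy - frame_h / 2.0) / (frame_h / 2.0)

    # Assumed convention: positive pan = right, positive tilt = down.
    return (gain * dx, gain * dy)
```

Calling `centering_step` once per processed frame and publishing the result as a relative move gives the incremental behavior described above: lots of tiny corrections instead of one absolute jump.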
So whenever the algorithm finishes an iteration, it’ll have the latest image ready to process for the next one. Quick, somebody implement this in GLSL 😛
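The two-thread setup boils down to a “latest value wins” handoff. Here’s a minimal sketch of that pattern using Python’s standard `threading` module. The `LatestFrame` class and `run_demo` function are hypothetical names of mine, and the integers stand in for real video frames; the actual subscriber and tracker are more involved than this.

```python
import threading
import time


class LatestFrame:
    """Holds only the newest frame; older unprocessed frames are dropped."""

    def __init__(self):
        self._cond = threading.Condition()
        self._frame = None
        self._seq = 0  # bumps on every put, so readers can spot new frames

    def put(self, frame):
        with self._cond:
            self._frame = frame
            self._seq += 1
            self._cond.notify_all()

    def get_newer(self, last_seq, timeout=None):
        """Block until a frame newer than `last_seq` exists.

        Returns (seq, frame), or (last_seq, None) if the wait times out.
        """
        with self._cond:
            if not self._cond.wait_for(lambda: self._seq > last_seq, timeout):
                return last_seq, None
            return self._seq, self._frame


def run_demo(n_frames=200):
    """Fast producer, slow consumer; returns the frames the consumer saw."""
    latest = LatestFrame()
    processed = []

    def subscriber():
        # Stand-in for the viewfinder thread ripping through the stream.
        for i in range(n_frames):
            latest.put(i)

    def tracker():
        seq = 0
        while True:
            seq, frame = latest.get_newer(seq, timeout=1.0)
            if frame is None:
                break  # stream went quiet
            processed.append(frame)
            if frame == n_frames - 1:
                break  # saw the final frame
            time.sleep(0.001)  # stand-in for the slow face detection

    threads = [threading.Thread(target=tracker),
               threading.Thread(target=subscriber)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return processed
```

The tracker usually ends up processing far fewer frames than the subscriber received, which is the whole point: the stream never backs up, and the detector always works on the freshest image.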

So now you ask, “Great, you’ve got primitive facial tracking which moves the pan/tilt to keep a person’s face in the center of the frame. WHY?” Well, I’m glad you asked… just imagine, if you will, a use case where a news reporter is in the field without a camera guy. Another good example would be if you want to track a certain feature in the world over a very long timeframe but you don’t know for certain which direction it will travel in (e.g. a plant growing). The last thing you want is to come back a few days later and find out the plant grew out of the frame before it bloomed, ruining your whole shoot. Even though we’re doing facial tracking, the OpenCV algorithm is really “feature tracking”, so given a good enough training set it should theoretically be able to track other features within a frame and realign the camera to follow them.

TODO: I’ll never get to it this semester, but if we were to flesh out the TV news reporter scenario I’d love to add some heuristics to the MocoFacialTracker so that it uses some simple composition rules (like rule of thirds, horizon line, etc.). Also, if a second face shows up in the frame, maybe it can adjust to doing a two-shot automatically, especially if the faces are pointed at each other as in conversation (e.g. a news reporter interviewing a witness). I think PittPat might have the facial tracking we’d need for face direction. I’d also love to track the handheld microphone the news reporter uses, as a cue to the algorithm as to where it should be focusing its attention. For example, if the news reporter says something into the mic and then shifts it to the witness to speak, the algorithm would know to follow and focus on the witness until the mic is brought back. Just little things like that, which a human camera guy would instinctively do and which aren’t too computationally heavy or AI-y, would be great things to add.