Hi again! This week, we began gearing up for Halves, working towards a common understanding of what our product can do and what the UI should be able to display.

We read several papers related to our current goal of aggregating videos and mapping them to player-defined labels. Here’s a summary of each paper and how it might be relevant to us:

1. Trend Detection in Social Data, Hendrickson et al.

The paper discussed mathematical models for detecting trending hashtags in Twitter posts. We could adopt some of these models to detect trending terms in tweets related to Star Wars Battlefront 2 (SWBF2). However, given the limited amount of SWBF2-related data available, the models might be inaccurate.
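To make the idea concrete, here is a minimal sketch of one simple way to flag trending terms: treat each term's count per time window as Poisson-distributed, estimate its rate from past windows, and flag terms whose current count would be very unlikely at that rate. This is a generic spike test chosen for illustration, not necessarily one of the models in Hendrickson et al.; the windowing, the `alpha` threshold, the `rate_floor`, and the sample counts are all hypothetical.

```python
import math
from collections import Counter
from typing import List, Tuple


def poisson_sf(k: int, lam: float) -> float:
    """Return P(X >= k) for X ~ Poisson(lam), via 1 - P(X <= k - 1).

    Fine for the small rates in this sketch; very large rates would need
    a log-space or library implementation to avoid underflow.
    """
    if k <= 0:
        return 1.0
    term = math.exp(-lam)  # P(X = 0)
    cdf = term
    for i in range(1, k):
        term *= lam / i  # P(X = i) computed from P(X = i - 1)
        cdf += term
    return max(0.0, 1.0 - cdf)


def trending_terms(
    history: List[Counter],
    current: Counter,
    alpha: float = 0.01,
    min_count: int = 3,
    rate_floor: float = 0.5,
) -> List[Tuple[str, int, float, float]]:
    """Flag terms whose count in `current` is improbably high given `history`.

    history: non-empty list with one Counter of term counts per past window.
    current: term counts for the latest window.
    Returns (term, count, expected_rate, p_value) tuples, most surprising first.
    """
    n = len(history)
    flagged = []
    for term, count in current.items():
        if count < min_count:
            continue  # too rare to judge either way
        # Expected per-window rate from past windows; the floor keeps
        # never-seen terms from getting a rate of exactly zero.
        lam = max(sum(window[term] for window in history) / n, rate_floor)
        p = poisson_sf(count, lam)
        if p < alpha:
            flagged.append((term, count, lam, p))
    return sorted(flagged, key=lambda t: t[3])


if __name__ == "__main__":
    # Made-up counts for three past windows and the current window.
    history = [
        Counter({"#swbf2": 5, "lootboxes": 1}),
        Counter({"#swbf2": 6, "lootboxes": 2}),
        Counter({"#swbf2": 4, "lootboxes": 1}),
    ]
    current = Counter({"#swbf2": 6, "lootboxes": 12})
    for term, count, lam, p in trending_terms(history, current):
        print(f"{term}: {count} vs expected {lam:.2f} (p={p:.1e})")
```

In this toy data, "#swbf2" stays near its usual rate and is not flagged, while the jump in "lootboxes" is. With only a handful of SWBF2 tweets per window, the estimated rates are noisy, which is where the accuracy concern above comes from.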

2. Learning to Hash-tag Videos with Tag2Vec, Singh et al.

The authors described how they trained a neural network to learn hashtag embeddings in a vector space from a set of training videos and their hashtags. Then, using Improved Dense Trajectory (IDT) features, they analyzed videos and mapped the resulting Fisher vectors to the tag vectors. This would allow us to analyze new videos and map them to an already established tag space. However, our lack of data makes it difficult for us to train the model.
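The mapping step can be pictured roughly as follows. This minimal sketch assumes a video is already summarized as a feature vector (standing in for a Fisher vector of IDT features), that a linear projection `W` into the tag space has already been learned, and that every tag has an embedding; tags are then ranked by cosine similarity to the projected video. The linear projection, the tiny dimensions, and the tag names are hypothetical placeholders, and the paper's actual model may differ.

```python
import math
from typing import Dict, List, Sequence


def project(W: Sequence[Sequence[float]], x: Sequence[float]) -> List[float]:
    """Map a video feature vector into tag space, i.e. compute W @ x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def rank_tags(
    video_features: Sequence[float],
    W: Sequence[Sequence[float]],
    tag_vectors: Dict[str, Sequence[float]],
    top_k: int = 3,
) -> List[str]:
    """Return the top_k tags whose embeddings are closest to the projected video."""
    v = project(W, video_features)
    scores = {tag: cosine(v, emb) for tag, emb in tag_vectors.items()}
    return sorted(scores, key=scores.get, reverse=True)[:top_k]


if __name__ == "__main__":
    # Hypothetical 2-D tag space and 3-D "video feature" space.
    tag_vectors = {"#headshot": [1.0, 0.0], "#vehicle": [0.0, 1.0], "#clutch": [0.7, 0.7]}
    W = [[1.0, 0.0, 0.0],
         [0.0, 1.0, 1.0]]
    print(rank_tags([0.9, 0.1, 0.0], W, tag_vectors, top_k=2))
```

Because new videos only need to be projected and compared, the expensive part is learning `W` and the tag embeddings in the first place, which is exactly the step our limited data makes hard.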

3. Few Example Video Event Retrieval using Tag Propagation, Mazloom et al.

Here, neighbor voting over concept-vector features is used to predict likely events (tags) in new videos. Because tags are propagated from similar, already-tagged videos rather than learned by training a classifier, no training data is needed to construct the model. However, the reported prediction accuracy is still generally low.
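Neighbor voting itself is simple to sketch. Assuming each already-tagged video is represented by a concept vector (for example, one detector score per concept), a new video picks its `k` most similar tagged videos by cosine similarity, and each neighbor votes for its own tags, weighted by that similarity. The similarity weighting, the value of `k`, and the toy concept vectors are our own illustrative choices, not necessarily the exact scheme used by Mazloom et al.

```python
import math
from collections import defaultdict
from typing import List, Sequence, Set, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def neighbor_vote(
    query: Sequence[float],
    labeled: List[Tuple[Sequence[float], Set[str]]],
    k: int = 3,
) -> List[Tuple[str, float]]:
    """Predict tags for `query` from its k most similar tagged videos.

    labeled: (concept_vector, tags) pairs for videos that already have tags.
    Returns (tag, vote) pairs, highest vote first.
    """
    # Score every tagged video once, then keep the k most similar.
    neighbors = sorted(
        ((cosine(query, vec), tags) for vec, tags in labeled),
        key=lambda pair: pair[0],
        reverse=True,
    )[:k]
    votes = defaultdict(float)
    for sim, tags in neighbors:
        for tag in tags:
            votes[tag] += sim  # each neighbor votes, weighted by similarity
    return sorted(votes.items(), key=lambda kv: kv[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical concept scores for [explosion, vehicle, crowd].
    labeled = [
        ([0.9, 0.1, 0.0], {"#explosion"}),
        ([0.8, 0.3, 0.1], {"#explosion", "#vehicle"}),
        ([0.1, 0.9, 0.2], {"#vehicle"}),
        ([0.0, 0.1, 0.9], {"#crowd"}),
    ]
    print(neighbor_vote([0.85, 0.2, 0.05], labeled, k=3))
```

Since nothing is trained, adding a newly tagged video only means appending it to `labeled`; the trade-off is that prediction quality depends entirely on how well the concept vectors capture similarity between videos.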

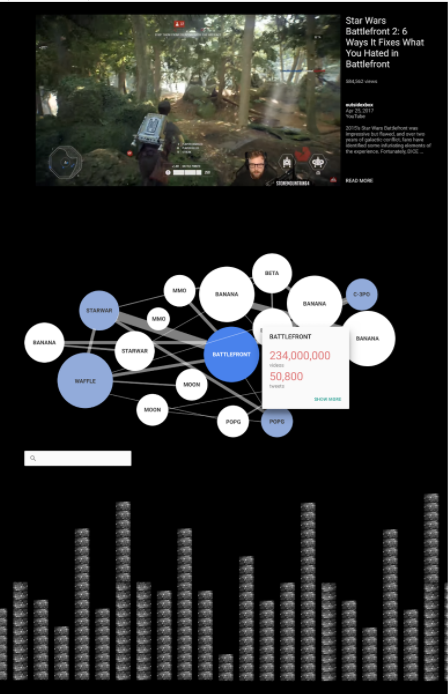

Overall, the papers suggested several features that we could implement in our product. More importantly, they gave us a general idea of how videos can be mapped to terms/labels. Hence, in the next iteration of our product, we will move on to building video-term mapping, categorization, and term-term relationships.

On the UI side, we created more mockups based on our client’s feedback and shared them:

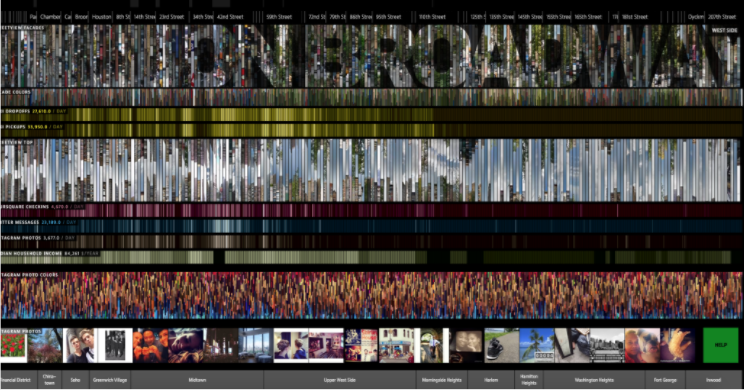

We received valuable feedback on the strengths and weaknesses of the three mockups. We also came across http://histography.io/, which will be the main source of inspiration for the next iteration of our UI. Designing a UI that is interactive, informative, and elegant is one of the biggest challenges of this project.

Next week, we’ll focus on developing our product features and move towards finalizing our UI.