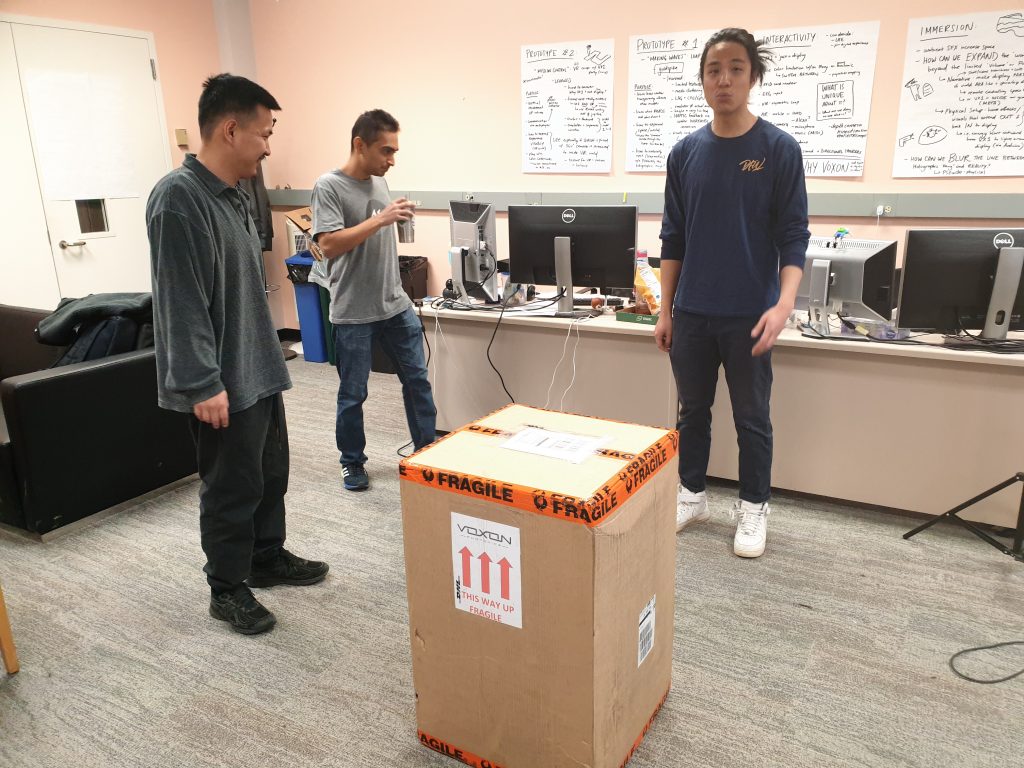

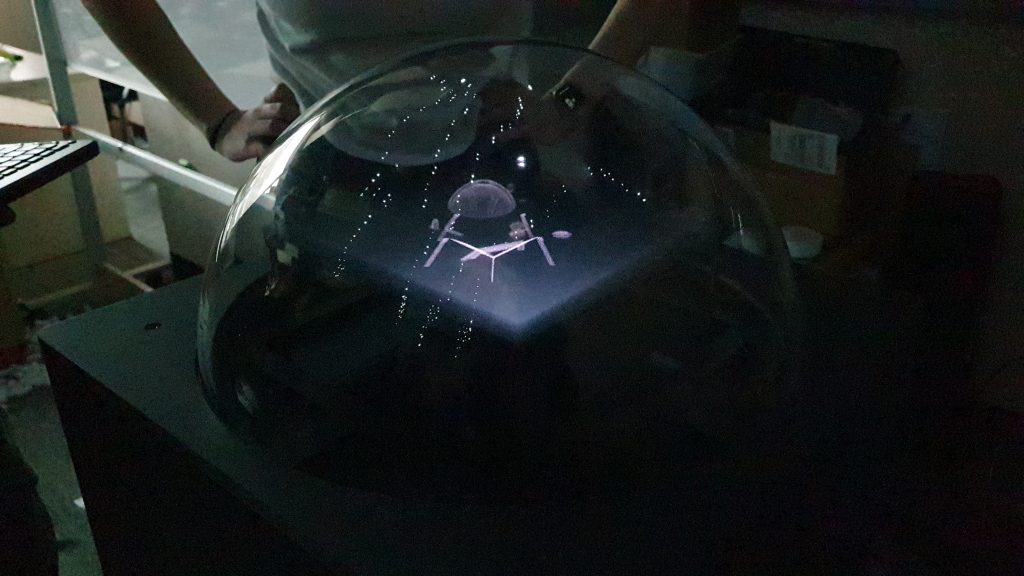

This week we had our softs presentation with the ETC faculty. We created some demo videos and prepared a live demo to show over Zoom. Our big challenge was figuring out how best to showcase the machine remotely, since it is best appreciated in person, where all of its 3D capabilities can be seen.

We definitely learned a lot from softs, and it is helping us prepare for finals (which will also be over Zoom). We shouldn’t try to have the machine speak for itself, because over Zoom it can’t do that very well. Instead, we will need to list out all of our design learnings and find a clear and concise way to tie them back to our prototypes. Definitely easier said than done 🙂