This week was all about mentally and physically preparing ourselves for the tasks to come:

Planning, Planning, Planning

The four of us sat down and addressed the elephant in the room: motion capture integration is coming. Soon we will be running playtests on early drafts of what will become our final build of the game in VR. In essence, it is time to start creating our deliverable.

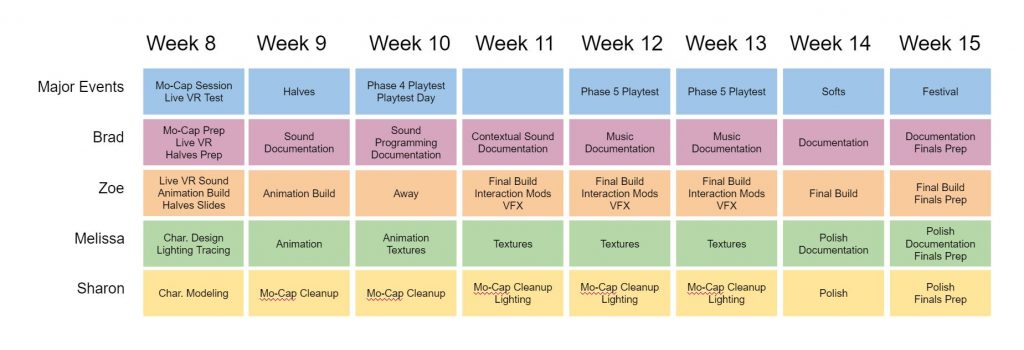

In order to settle our fears, we drew out the semester week by week, and determined what each of us would be doing during that time.

We still have not quite determined how audio (particularly voice-over and music) is going to fit into our overall integration, but we have not forgotten it. Much of it will simply come down to getting the data back. In the meantime, we moved into the main focuses of Week 7.

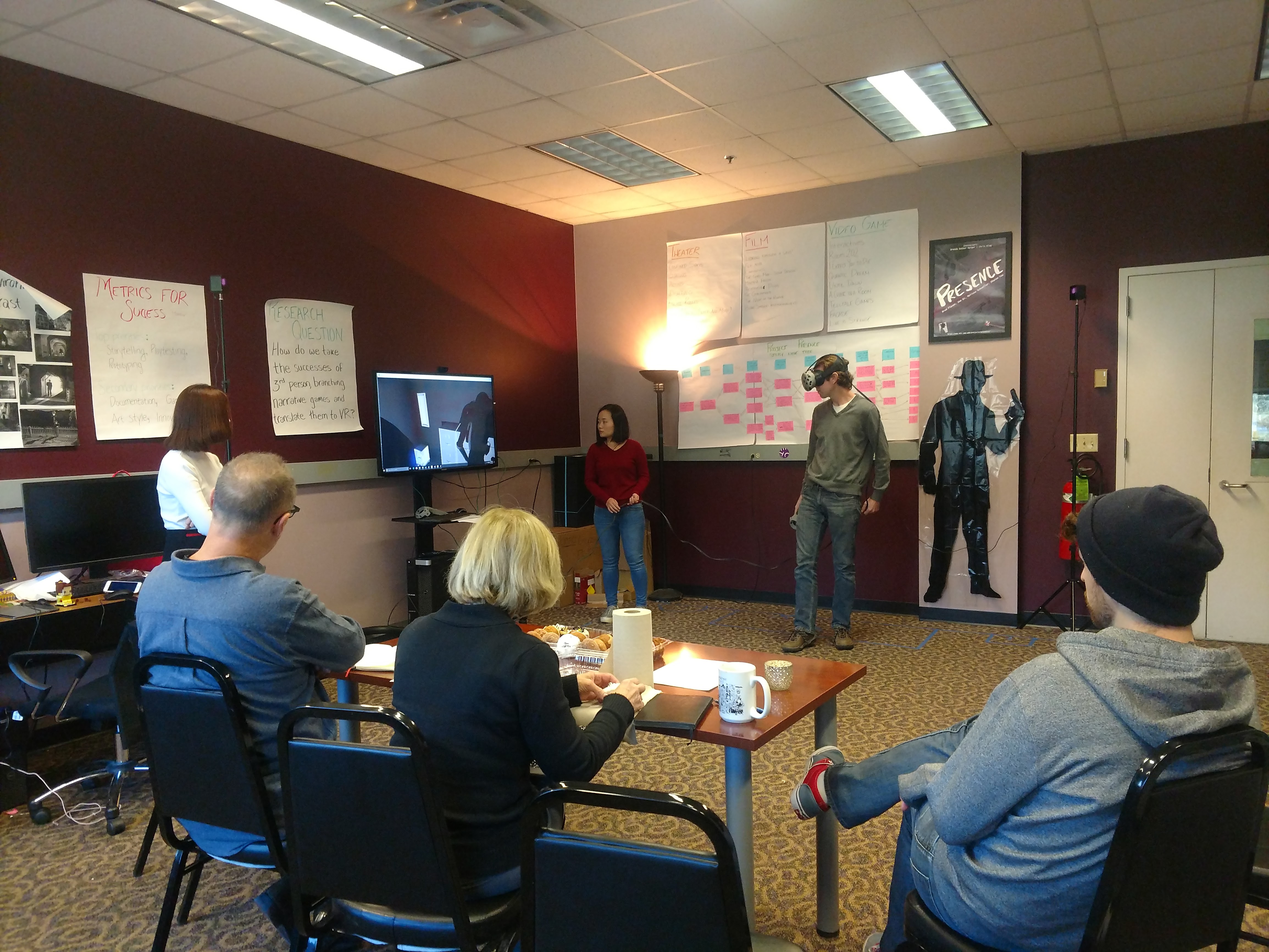

Creating the Live VR Experience

Three things needed to be addressed in this week of VR development:

- Determining the constants and variables of what we will be testing in this and subsequent playtests

- Finding and addressing the technical challenges of actor-puppeted characters picking up and putting down objects they can’t see

- Designing the artistic effect we want to have in the final build

Constants and Variables

Brad led the team in a meeting on Monday where we reviewed what we are calling “knowns” and “known unknowns.” These are our constants and variables, respectively.

Our knowns are:

- 6 objects in the space that the player can interact with to change the story

- The player will not be allowed to interact with any other objects in the environment

- The interactable objects each have two states (e.g. the gun is loaded or unloaded)
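As a rough illustration of what these knowns imply (a minimal sketch, not the project's actual code), each interactable object can be modeled as a two-state toggle. The `Interactable` class and its fields are hypothetical names chosen for this example; only the gun's loaded/unloaded states come from the list above.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass
class Interactable:
    """A hypothetical two-state object the player can change."""
    name: str
    states: Tuple[str, str]   # exactly two named states
    current: int = 0          # index into `states`

    def toggle(self) -> str:
        """Flip to the other state and return its name."""
        self.current = 1 - self.current
        return self.states[self.current]


# The gun example from the list above; it starts unloaded in this sketch.
gun = Interactable("gun", ("unloaded", "loaded"))

# Six objects with two states each gives 2**6 possible room configurations.
OBJECT_COUNT = 6
configurations = 2 ** OBJECT_COUNT
```

With six objects at two states each, there are 64 possible combinations of object states, which is one reason pinning these down as constants before playtesting is useful.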

Our known unknowns:

- How we allow the player to interact with the objects (e.g. using the objects as though they were real vs. pointing and clicking)

- How we signal aspects of the game to the player (e.g. highlighting interactable objects, using flashing to indicate a choice locking in)

- How we contextualize the experience (i.e. creating a threshold to help the player understand their role)

Technical Challenges

The Vive Trackers opened an interesting playtesting door for us by allowing us to run a game before the character models are even complete. However, since the trackers do not have triggers and do not come with headsets of their own, they pose their own difficulties.

These difficulties have included making sure that the characters don’t accidentally walk through walls, and ensuring that objects can be picked up without the player being able to also mess with them. The walking through walls is solvable – our floor is now covered in tape markings – but the picking up of objects was more complex.

Zoe has been working hard to have each button on a keyboard trigger an action with a different object: “K” opens the door, “L” allows Giuli or Luca to pick up the gun, “G” hands off the photograph. This involved plenty of finessing of the alignment of both the objects and the character puppets. While the phone could be picked up at whatever angle worked for the actor, the gun needed to aim along the pointer finger, no matter which way the hand was tilted when the actor reached for it. The result is a surprisingly smooth and immersive experience.
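The idea can be sketched in a few lines. This is only an illustration under assumptions, not Zoe's implementation: the action names, the `SNAP_TO_FINGER` set, and the helper functions are all hypothetical, and directions are plain 3D tuples rather than anything engine-specific. The key letters and the gun/phone behavior are the ones described above.

```python
import math

# Key letters come from the post; the action names are placeholders.
KEY_BINDINGS = {
    "K": "open_door",
    "L": "pick_up_gun",
    "G": "hand_off_photograph",
}

# Objects that must point along the actor's pointer finger when held.
SNAP_TO_FINGER = {"gun"}


def action_for_key(key):
    """Return the action bound to a key, or None if the key is unbound."""
    return KEY_BINDINGS.get(key.upper())


def normalize(vector):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in vector))
    if length == 0:
        raise ValueError("cannot normalize a zero-length vector")
    return tuple(c / length for c in vector)


def held_direction(object_name, finger_direction, direction_at_pickup):
    """Pick the direction an object points once it is in the hand.

    Snapped objects ignore how the hand was tilted at pickup and follow
    the finger; everything else keeps the angle it was grabbed at.
    """
    if object_name in SNAP_TO_FINGER:
        return normalize(finger_direction)
    return normalize(direction_at_pickup)
```

For example, `held_direction("gun", finger, grabbed)` always follows `finger`, while `held_direction("phone", finger, grabbed)` keeps the `grabbed` angle. Because the trackers have no triggers, an operator at the keyboard presses the key when the actor reaches for the object.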

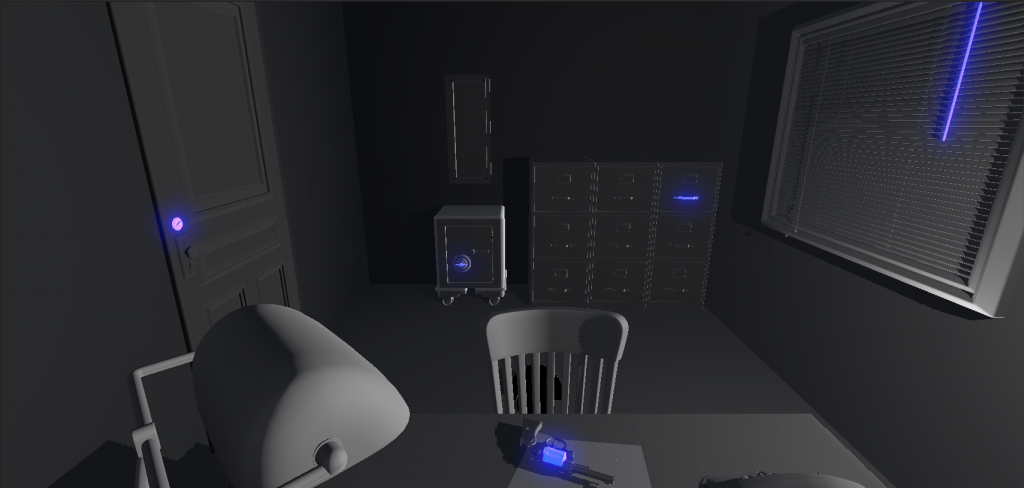

Artistic effect

Sharon was hard at work this week channeling the feeling of Film Noir through lighting. Earlier in the week we had a lighting professor from the Purnell School of Drama and Emmy Award-winning scenic designer Kevin Lee Allen come by to review our set so far and give feedback on our character and environment plans.

One of the things we were struggling with was creating silhouettes for the characters. We had decided to use shadows both for dramatic effect and to mask the lack of facial animation, which would be too far out of scope. When the experts put on the headsets, they both agreed: even though the puppet on the left showed more of the face that wasn’t there, they far preferred it to the bizarrely flat puppet on the right. And so we had our character direction!

Setting the Stage for Capture

So much of what goes into motion capture is the work done to ensure the most realistic motion. The actors have the emotional expression part down, but what about everything else? What about getting the right curve of the elbow when opening a door? Or making sure that the character is actually looking out a window, and not at a nearby wall?

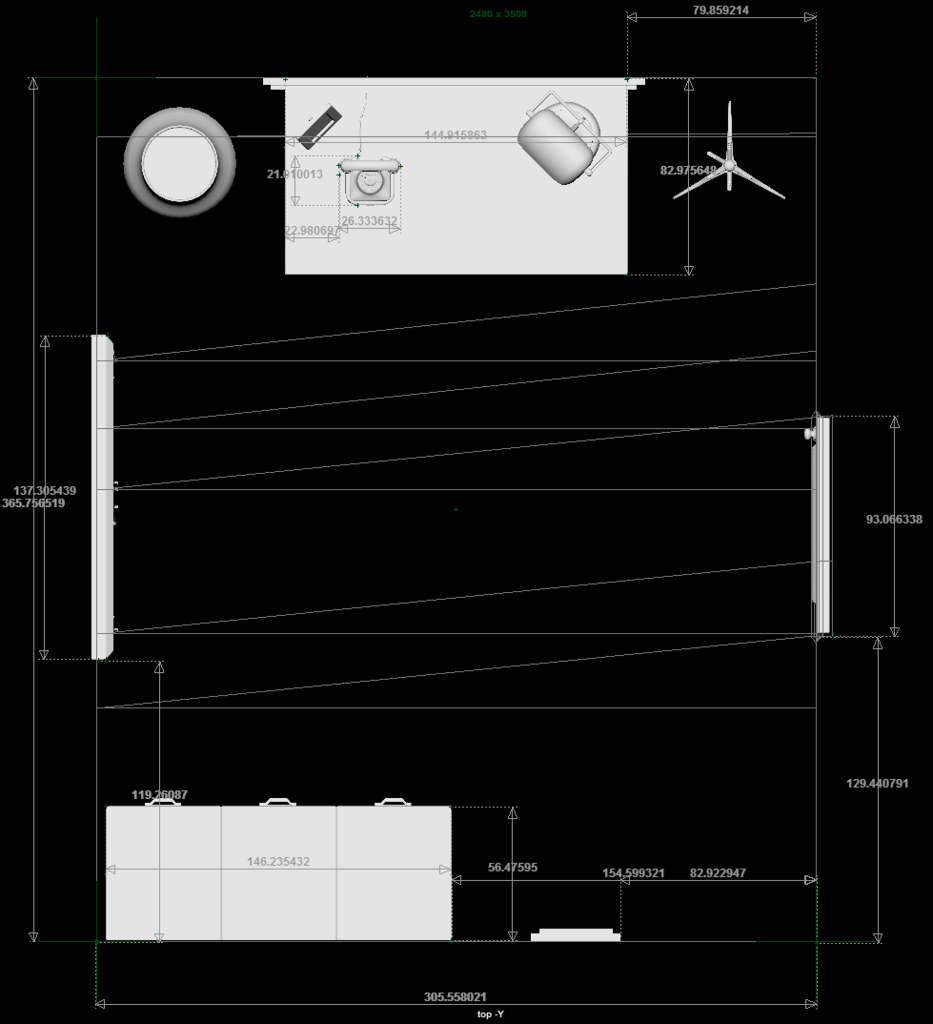

Well, that’s what measuring the environment comes down to!

First we marked in red where all the objects would be and how large they were. Then, after talking with Justin Macey, the research technician for the Carnegie Mellon Robotics Institute, we realized we could insert actual objects into the space without losing fidelity in motion. Armed with that knowledge, we started to acquire a plethora of random office paraphernalia. Brad drove filing cabinets over from the ETC, Sharon made a safe out of a metal cabinet and a cardboard door, and Justin literally built us a door.

Once all the pieces were in place, we had our final rehearsal before mo-cap. It was important to us that we had one rehearsal where: 1) the actors were rehearsing as though they were actually being recorded, and 2) the rest of the team could watch and provide feedback on certain motions and positions that seem innocuous but could be problematic.

All in all it was an incredibly busy and successful week of preparations, and we can’t wait to see where it all leads!

See you next week!