What a busy week! As Week 13 is really only 1 full day of work before teammates start to leave for Thanksgiving, we are getting as much as we can into our 12th week of production.

Updating the Audio

Given that we are a team of artists and programmers, we needed to seek outside help when it came to creating sound. After all, even though Brad and Zoe have both been sound designers for our rapid iteration course, Building Virtual Worlds, neither felt skilled enough to measure against the rest of our production quality. Therefore, we sought the help of Stephen Murphy for composing our soundtrack, the Vlahakis Recording Studio in the College of Fine Arts for recording Stephen’s band live, and Julian Korzeniowsky for sound integration.

Julian is an ETC student himself, and has worked with Melissa and Sharon in the past on a 360-degree animated film. In fact, he specializes in positional audio, so engineering sound for virtual reality is right up his alley. He met with our team to set up a schedule, and then went straight with Brad to the studio.

Over the course of the week, we spent about 4 hours with each actor, getting the voices of Luca and Giuli respectively into their final forms. After having spent weeks using placeholder audio from the motion capture session, it is incredible how good the studio work sounded. The reverb from motion capture was too big for the small room in which the story takes place, and the actors spent much of their energy prioritizing their body language. When these guys put their all into their vocal delivery, we all felt it!

Once we get back from Thanksgiving, we will go node by node, integrating the sound so that it syncs up with the cleaned-up animation.

Testing on a More Targeted Audience

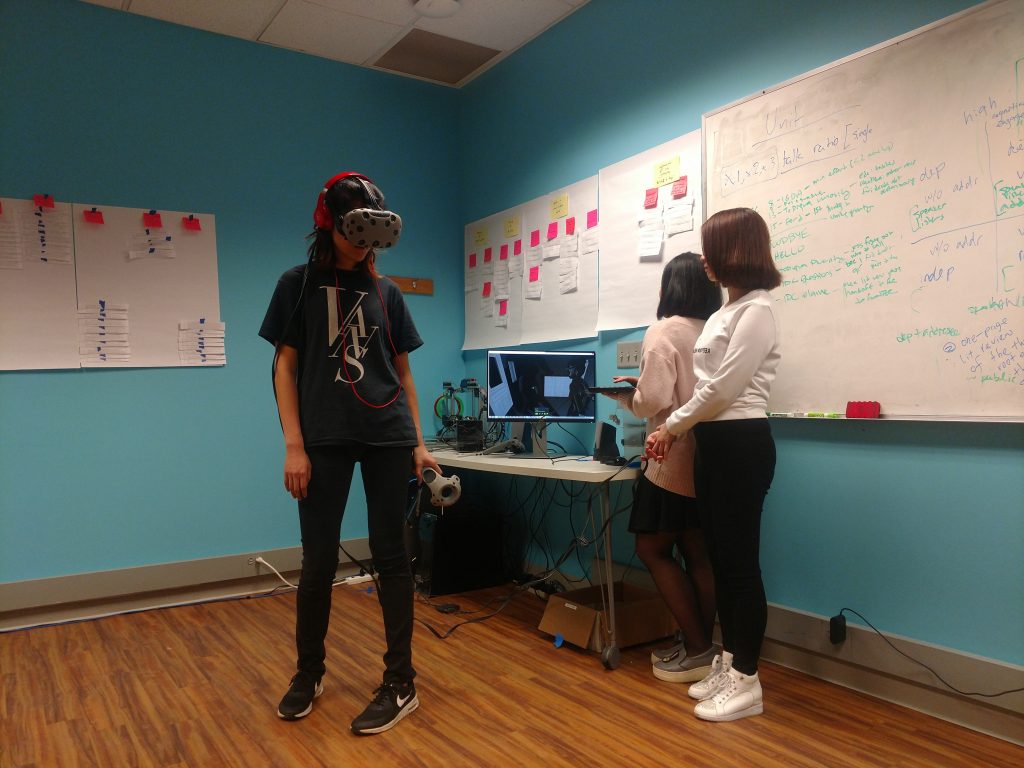

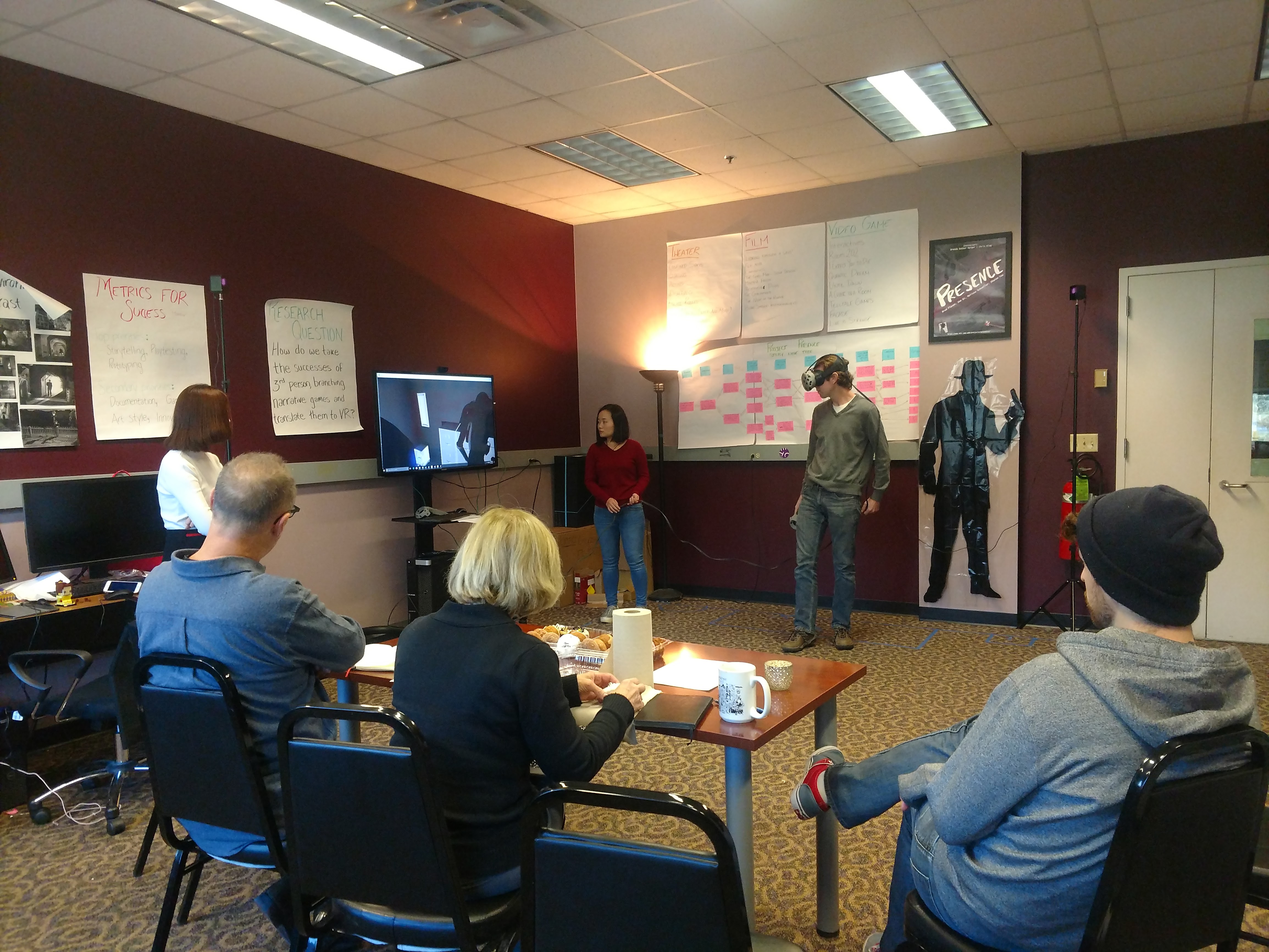

On Friday we FINALLY got the chance to test with an audience closer to the one for which we are designing. We have tested on game designers and on completely naive players, but the folks we would be getting in the Oh! Lab are students and young adults who enjoy and are familiar with video games but may not necessarily know how they are built.

As a reminder, we are currently conducting tests to determine the right design for the controller and the interactive objects. This involved putting together two builds:

- Build A: a point-and-click design where all objects are interactive at once

- Build B: a touch-and-click design where only one object is interactive at a time

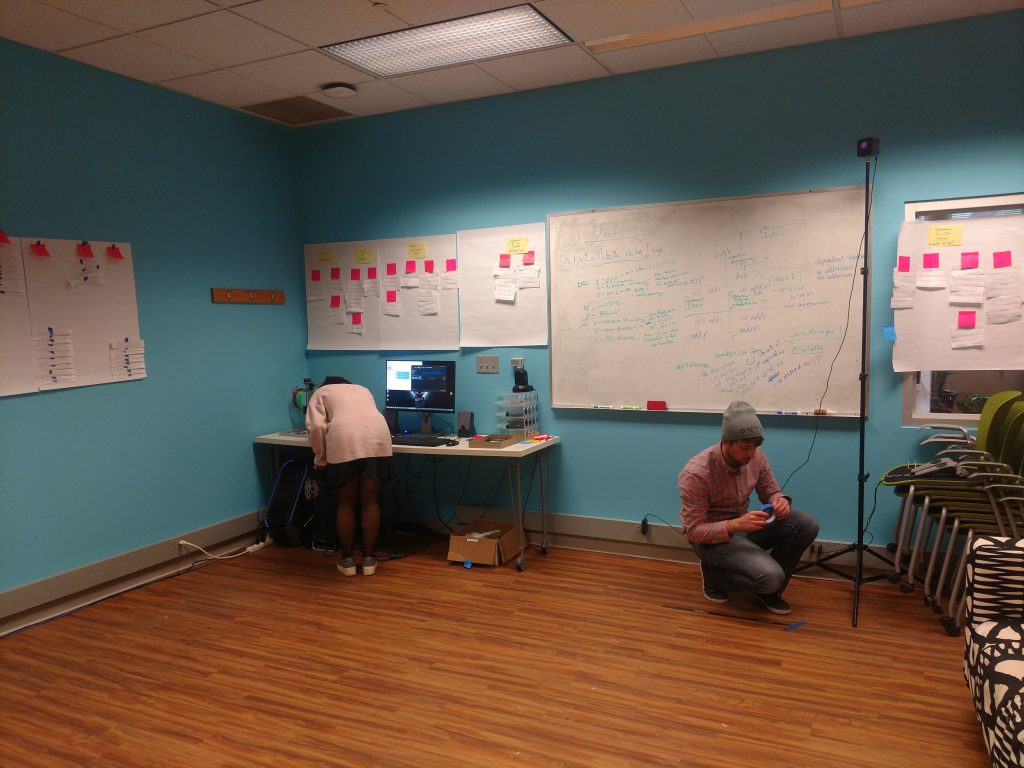

Zoe (left) getting the Oh! Lab computer ready for testing while Brad (right) helps to set up the Vive lighthouses

The Oh! Lab has plenty of space perfect for playing various games. They did not have a Vive, however, so the Presence team packed up all of the gear, loaded it into Brad’s car, and brought it all to main campus. We felt like a visiting band, dragging along all of these large boxes and bits of equipment, as we moved single file through Newell-Simon Hall. We looked a little silly, but with the help of Justin Puglisi, HCI administrative coordinator, we were able to get set up for testing.

We had 8 testers. Some were researchers in the department of Human-Computer Interaction, some were students of information systems, and others were from the school of drama. We put half of them in Build A and half in Build B. The playthrough took about 15 minutes, and then we had 15 minutes for questions.

What worked

The point-and-click design worked exactly as we hoped. People in Build A felt very comfortable walking around, finding a place to stand, and knowing what to do. While we don’t love the aesthetic of the laser, that mechanic is definitely here to stay.

What didn’t work

Having one object at a time definitely did not work! Not only was it boring to have only one interactive thing available at any given moment, but with only one object highlighted blue, there were not enough examples in the game for the player to learn the interaction rules before the story got too far along. We even had one Build B player who did not realize the objects were interactive at all, as she only noticed the lock on the door to start the scene and the gun at the end.

The problem is that having all objects available wasn’t great either. By showing all of our cards up front, we ruined the potential for surprise. Players who got to experience Build A said that they would have preferred to have a little bit of mystery – to turn around and find that something new had become active.

So what’s next?

Next week is Thanksgiving, meaning we get one day with everyone in town. And when we say everyone, that includes our composer and his band. They’re in town for soundtrack recording day!

We will also be discussing what we will be presenting at Softs, so the next build is definitely in our sights.