This was a quiet week, so this will not be a long post.

What We’re Fixing

With Zoe back from the Unity conference, we were able to address what went wrong with the build, and we now finally have our first playable version of the game. Until we can get the fully cleaned-up animation in, Zoe will continue to use the keyboard to puppet room animations, but otherwise the game runs fully independently.

Sharon is in the midst of cleaning up that animation. Because our motion capture studio can only do gross physical capture, we have to animate the finer physical movements by hand. This includes anything the fingers or wrists do. The room animations are also being timed at this point to match the characters, creating an overall smoother scene.

In the meantime, so that Sharon can eventually get back to fixing up the lighting, Melissa is spending the week focusing on textures. Because the room is modeled to be very photo-realistic and the characters are not, the textures exist to bridge the gap between them. This will also give us a chance to make the characters easier to tell apart, as we add details to differentiate Giuli and Luca from each other.

Brad is working on getting us better audio. We will talk more about this next week, but we are putting in our best efforts to record both voice actors and the soundtrack before Thanksgiving so we can be done with the audio by the end of Week 14.

What We’re Changing

We discovered what worked in last week’s playtest, as well as what didn’t. Our next test will therefore implement some changes to increase clarity and explore the ideal interaction design.

For starters, as we mentioned before, we changed the design of the cabinet lock to make it clearer when you are locking and unlocking it. Melissa created a quick padlock and loop that we stuck onto the cabinet, and this instantly made the interaction clearer for players.

We then had a meeting to discuss how we want to refine player interactions. As of right now, we are grappling with two different questions: how players select objects (touch-and-click vs. point-and-click) and how many objects are interactive at a time (all at once vs. one at a time).

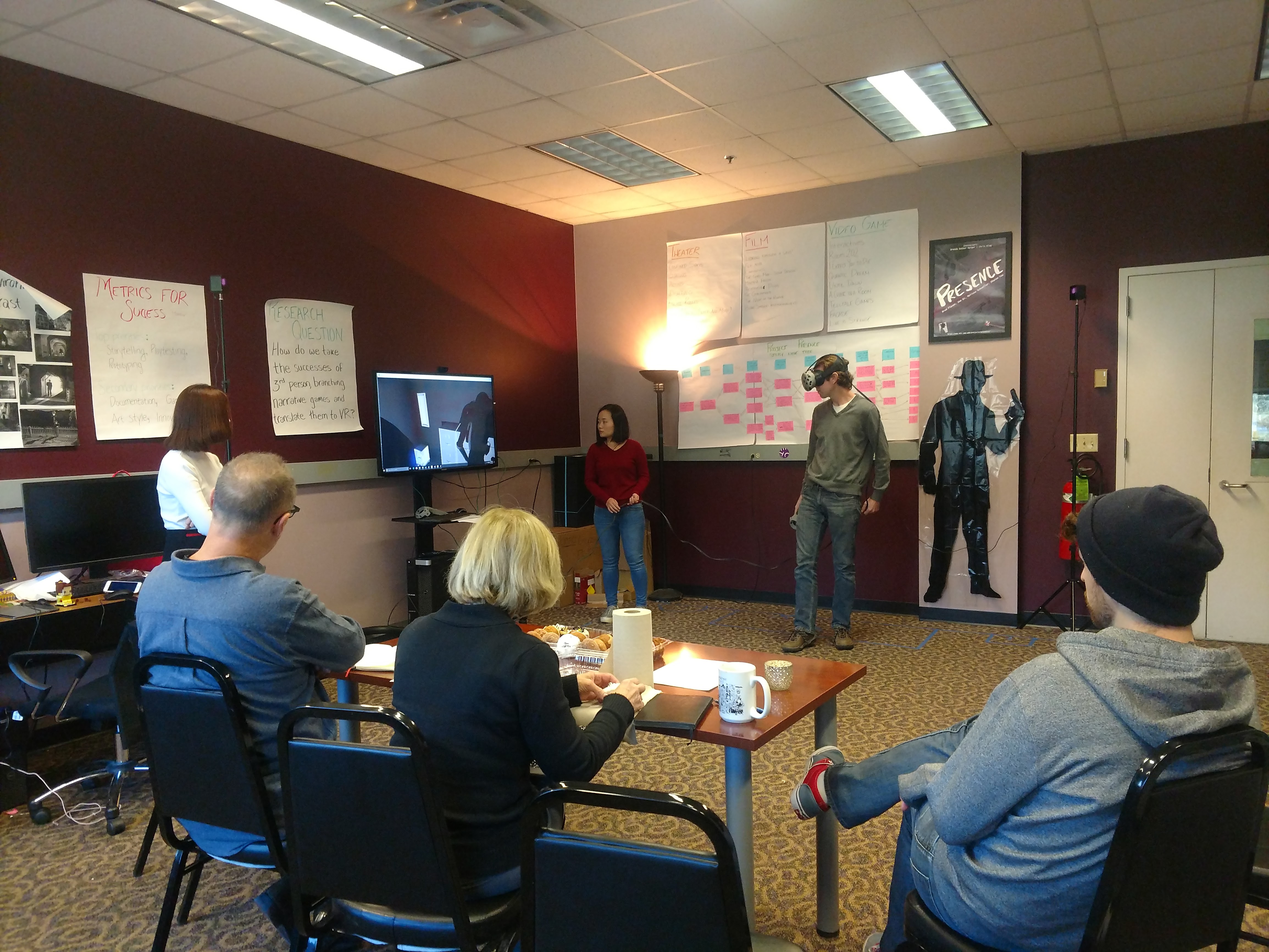

We already know what all-at-once with touch-and-click looks like, so we are going to make two builds to test at the Oh! Lab on main campus. Build #1 will feature all objects interactive at once with a laser point-and-click controller. Build #2 will continue to use touch-and-click and will restrict interactive objects so only one glows at a time.

We’ll let you know next week how that all goes!