<DOCUMENTATION>

- Abstract

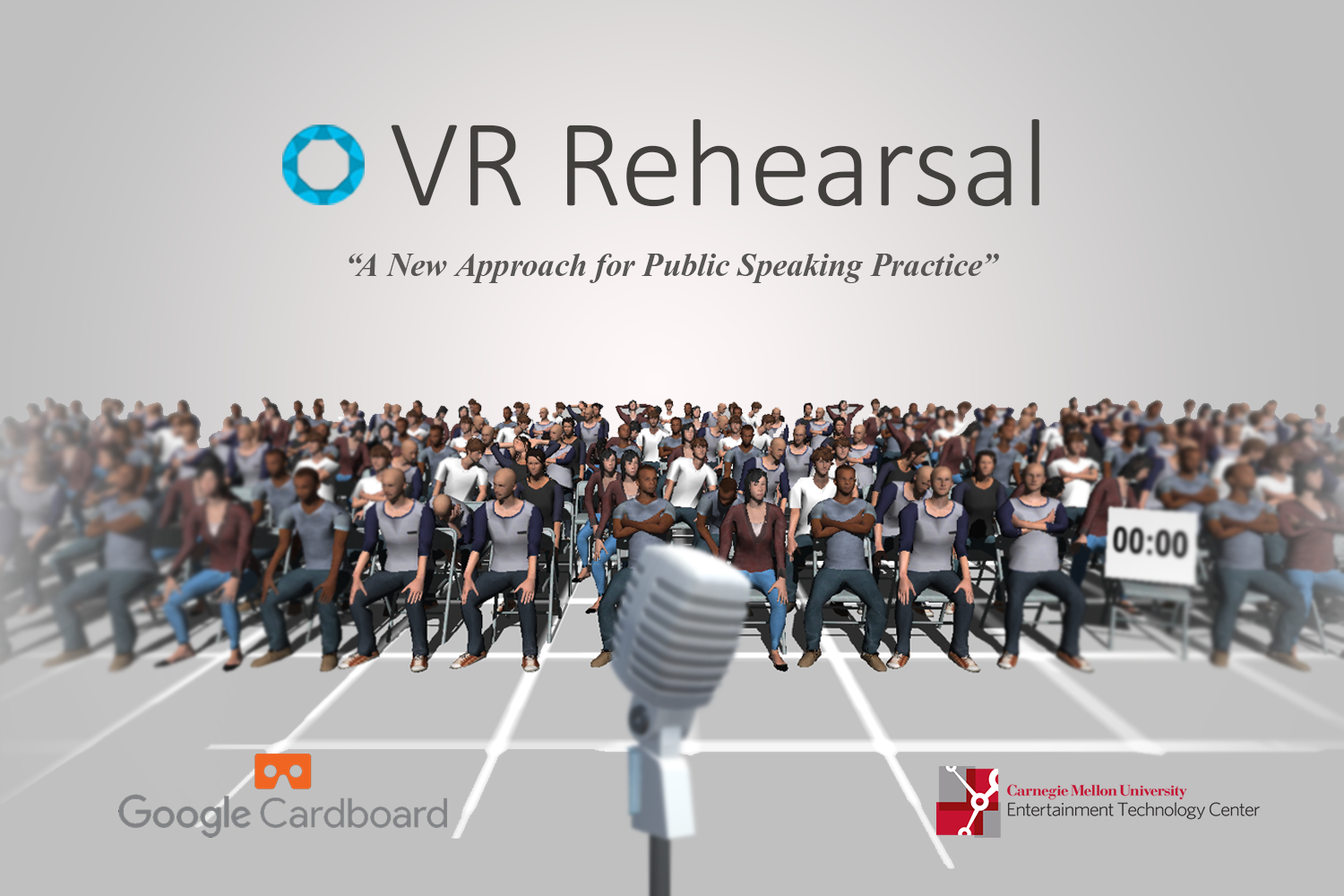

We present VR Rehearsal, a mobile VR application that helps the general public practice presentations in an innovative and convenient way. It creates an immersive presentation environment and provides two types of feedback: real-time reactions from a simulated virtual audience and post-session visualizations of performance statistics. The application helps presenters evaluate and improve their skills efficiently.

Keywords: virtual reality, public speaking, transformational game

- 1 Purpose

Public speaking and delivering presentations are familiar challenges for most people, if not everyone. From students to business professionals, people frequently face situations in which preparing for an upcoming presentation is crucial. Although good preparation is important for successful delivery, the methods presenters currently use remain traditional, such as rehearsing in front of a mirror. These methods cannot reproduce an immersive environment, nor can they quantitatively evaluate a presenter’s performance in terms of eye contact and speech fluency. To address these limitations, we introduce VR Rehearsal: a mobile VR application with which presenters can improve their presentation skills conveniently using their mobile devices and a low-cost Google Cardboard viewer. By using VR Rehearsal, presenters are expected to improve in the following aspects, which we believe are key to a successful presentation: proper loudness, eye contact, clear pronunciation, good time management, and fluency.

- 2 Approach

VR Rehearsal provides an interactive virtual presentation environment that can be customized to fit the presenter’s practice needs. Before practicing, the presenter configures a practice session on a PC over the network, which includes uploading slides and selecting the type of environment (e.g. a small classroom or a large auditorium) and audience (e.g. students in casual wear or businessmen in suits and ties). When the VR Rehearsal application is launched, it synchronizes these settings and is ready to use.

VR Rehearsal aims to provide the presenter with an immersive, visually faithful environment and useful real-time feedback. To achieve these two goals, a 3D crowd is generated and simulated so that it reacts based on the presenter’s performance. Two major types of input from the presenter are tracked: head motion, for computing the approximate looking direction, and speech, for analyzing the presenter’s volume and fluency. In particular, speech fluency is evaluated by detecting self-interruption points and distinguishing them from normal gaps between words. An agent-based, discrete simulation model takes these inputs into account to generate audience behavior in a state-based manner. In each simulation step, the weights of all states are computed before the final behavior is sampled and blended from the states. To efficiently generate crowd models suitable for mobile platforms, we combined an existing character generation tool with a level-of-detail generation tool. Finally, character animations are generated from motion capture.
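The per-step logic of the state-based audience model (compute state weights, sample a state, blend toward it) can be illustrated with a minimal sketch. This is not the authors' implementation: the state names, the weighting formula, the blend factor `alpha`, and all function names below are assumptions chosen only for illustration, and the inputs are assumed to be normalized to the range [0, 1].

```python
import random

# Hypothetical audience states; the paper does not list the actual ones.
STATES = ("attentive", "neutral", "distracted")


def state_weights(eye_contact, volume, fluency):
    """Map normalized presenter inputs in [0, 1] to non-negative state weights.

    The averaging formula is an illustrative assumption.
    """
    engagement = (eye_contact + volume + fluency) / 3.0
    return {
        "attentive": engagement,
        "neutral": 0.5,
        "distracted": 1.0 - engagement,
    }


def sample_state(weights, rng):
    """Draw one state with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[n] for n in names], k=1)[0]


def blend(previous, target, alpha=0.3):
    """Move the agent's per-state blend factors toward the sampled state."""
    return {
        s: (1.0 - alpha) * previous[s] + alpha * (1.0 if s == target else 0.0)
        for s in previous
    }


def step(agent_blend, eye_contact, volume, fluency, rng):
    """One discrete simulation step for a single audience agent."""
    weights = state_weights(eye_contact, volume, fluency)
    target = sample_state(weights, rng)
    return blend(agent_blend, target)


if __name__ == "__main__":
    rng = random.Random(42)
    agent = {"attentive": 0.0, "neutral": 1.0, "distracted": 0.0}
    for _ in range(10):
        agent = step(agent, eye_contact=0.8, volume=0.7, fluency=0.9, rng=rng)
    print(agent)
```

Sampling a target state and blending toward it, rather than switching states outright, keeps each agent's behavior from changing abruptly between steps while still letting individual agents differ from one another.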

VR Rehearsal also generates post-session feedback after each session to help the presenter review the performance. A spherical gaze “heat map”, in which each pixel indicates how frequently the user looked in the corresponding direction, is generated to visualize the presenter’s eye contact pattern. A speech performance graph is also generated to show the presenter’s speech volume and fluency. By combining real-time feedback from the audience simulation with visualized post-session feedback, the presenter is expected to assess the performance easily, which leads to efficient self-improvement.
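One way to build such a spherical gaze heat map is to bin each sampled looking direction into an equirectangular grid and count hits per cell. The sketch below assumes unit direction vectors with y pointing up and +z pointing straight ahead; the grid resolution and the function names are hypothetical and are not taken from the application itself.

```python
import math


def direction_to_cell(x, y, z, width, height):
    """Map a unit gaze direction to a (row, col) cell of an equirectangular grid.

    Assumed convention: y is up, +z is straight ahead.
    """
    yaw = math.atan2(x, z)                        # [-pi, pi], 0 = straight ahead
    pitch = math.asin(max(-1.0, min(1.0, y)))     # [-pi/2, pi/2], clamped for safety
    col = int((yaw + math.pi) / (2.0 * math.pi) * width) % width
    row = min(height - 1, int((math.pi / 2.0 - pitch) / math.pi * height))
    return row, col


def accumulate(samples, width=64, height=32):
    """Count how often each direction cell was looked at."""
    grid = [[0] * width for _ in range(height)]
    for x, y, z in samples:
        row, col = direction_to_cell(x, y, z, width, height)
        grid[row][col] += 1
    return grid


def normalize(grid):
    """Scale counts to [0, 1] so they can be mapped to heat map colors."""
    peak = max(max(r) for r in grid)
    if peak == 0:
        return [[0.0] * len(r) for r in grid]
    return [[count / peak for count in r] for r in grid]
```

With head orientation sampled at a fixed rate, each cell count is proportional to the time spent looking in that direction, so the normalized grid can be colored directly as the heat map texture.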