Prototype 3 - Chemistry

Objective

A collaborative experience in which students build a model of a molecule together, without needing to be physically located in the same space.

Platform

Oculus Rift or HTC Vive + ZED Mini + Leap Motion

Development Process

For this demo, we did not expect to have much time left after development for testing with students and teachers. We assumed that networking the headset rigs and syncing the model data between them would not be straightforward, so we devoted much of our time to MVP prototyping and to content research, which let us make better-informed decisions on our first attempt.

Design Research

We consulted with the Eberly Center at CMU and spoke to Dr. Timothy Nokes-Malach at the University of Pittsburgh, who helped us identify problems in the classroom that this technology might be able to solve. We also spoke to Kenneth Holstein, a PhD candidate at CMU’s HCII, who shared his thoughts based on his work on the use of AR in classrooms.

Some recurring themes in these conversations were:

- Don’t use technology to solve what is essentially a human problem; use it to facilitate the human solution. This was in reference to our earlier ideas of integrating AR into classroom debates or group activities with a stronger conversational component. It also applies to the scope of what the technology tries to do: teachers don’t like technology that tries to solve a problem best left to them, but they would like it to notify them that the problem exists.

- Remotely located students have an inferior classroom experience. This seemed like a problem AR could potentially address, since a remote student could feel a greater sense of presence when working on virtual objects together with a classmate.

We then settled on chemistry as our subject matter, specifically the geometry of molecules, and proceeded to develop the demo. For the chemistry-specific research, we relied heavily on Martina Rau’s work when defining the features and scope of the prototype.

Early Design

Learners will collaboratively create a virtual ball-and-stick model. The final structures will be checked and any errors marked, so that learners can discuss them and further modify their models.

The students can collaboratively build the model of the molecule, as they would with a physical ball-and-stick kit. They can observe the model from all angles and make changes based on their discussions with each other.

Learner-learner interactions

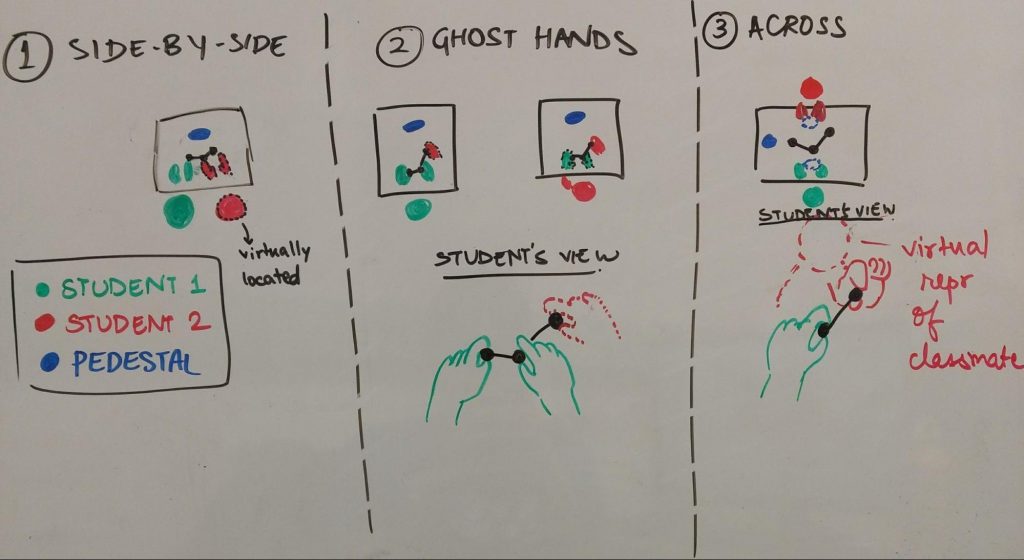

How are the students oriented in space when they perform these tasks? We laid out some options, along with the pros and cons we expected for each.

- Side-by-side

(+) Having the students seated adjacently in a virtual space is truest to the configuration in the labs. Students sit next to each other, each at their own computer, and collaborate with the model placed in the center.

(-) Might feel awkward given the physical desk space students are working with: the physical desk might end abruptly in the middle of the virtual workspace.

- Ghost hands

Students can see their partner’s movements in the space as hands working over the model.

(+) Might be good for a learner-teacher interaction, where the teacher is modifying or correcting student work.

(-) Might feel intrusive.

- Across

Students are seated across from each other, and can see a virtual representation of their classmate in front of them. They can collaborate on creating the model.

(+) It would be valuable to observe how interacting with a virtual avatar affects learning gains.

(-) The orientation of the molecule is fairly important pedagogically. In this setup, the students would not be able to view and discuss the molecule in the same orientation, since each would see it from the opposite side.

We decided to proceed with the students seated side-by-side for the scope of this prototype. As we added more players, we positioned them around our table to try both the side-by-side and across orientations.

Early MVP Prototype

We began development on this demo by building an MVP networking prototype in which two users located in the same space can pass a virtual object to one another.

We used simple top hat models as placeholders for remote user avatars. The interactions in this demo felt viscerally promising, so we proceeded to implement a more fleshed-out, chemistry-based demo.
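The report does not describe how the networking was implemented. As an illustration of one common way to keep a passed object consistent between two headsets, here is a minimal sketch of ownership transfer with a version counter, assuming each client broadcasts the returned updates over some transport that is not shown. `ObjectState`, `Replica` and `supersedes` are hypothetical names, not part of the prototype.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ObjectState:
    """Replicated state of one shared virtual object (hypothetical)."""
    owner: str       # client id currently allowed to move the object
    version: int     # incremented on every ownership change or move
    position: tuple  # (x, y, z) in the shared space


def supersedes(update: ObjectState, current: ObjectState) -> bool:
    """Higher version wins; equal versions are broken deterministically by owner id."""
    return (update.version, update.owner) > (current.version, current.owner)


class Replica:
    """One headset's copy of the shared object."""

    def __init__(self, client_id: str, initial: ObjectState):
        self.client_id = client_id
        self.state = initial

    def grab(self, position: tuple) -> ObjectState:
        """Take ownership locally and return the update to broadcast."""
        self.state = ObjectState(self.client_id, self.state.version + 1, position)
        return self.state

    def move(self, position: tuple) -> ObjectState | None:
        """Only the current owner may move the object; other clients get None."""
        if self.state.owner != self.client_id:
            return None
        self.state = ObjectState(self.client_id, self.state.version + 1, position)
        return self.state

    def receive(self, update: ObjectState) -> None:
        """Apply a remote update unless it is stale."""
        if supersedes(update, self.state):
            self.state = update


if __name__ == "__main__":
    start = ObjectState("alice", 0, (0.0, 0.0, 0.0))
    alice, bob = Replica("alice", start), Replica("bob", start)
    bob.receive(alice.grab((0.1, 0.0, 0.0)))  # Alice picks the object up
    alice.receive(bob.grab((0.3, 0.0, 0.0)))  # Bob takes it from her
    print(alice.state.owner, alice.move((0.0, 0.0, 0.0)))  # prints "bob None"
```

The owner check in `move` means only one headset drives the object at a time, so two users never fight over its position, and the tie-break on owner id lets replicas converge even if two grabs arrive with the same version.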

Final Prototype

The final prototype features a shared space in which users are positioned using image markers and can spawn atoms and combine them into molecules. It was important to get the structure right not just for the final molecule but also for the intermediate stages, so that students could visually understand how the forces between the atoms cause a molecule to acquire its specific shape.

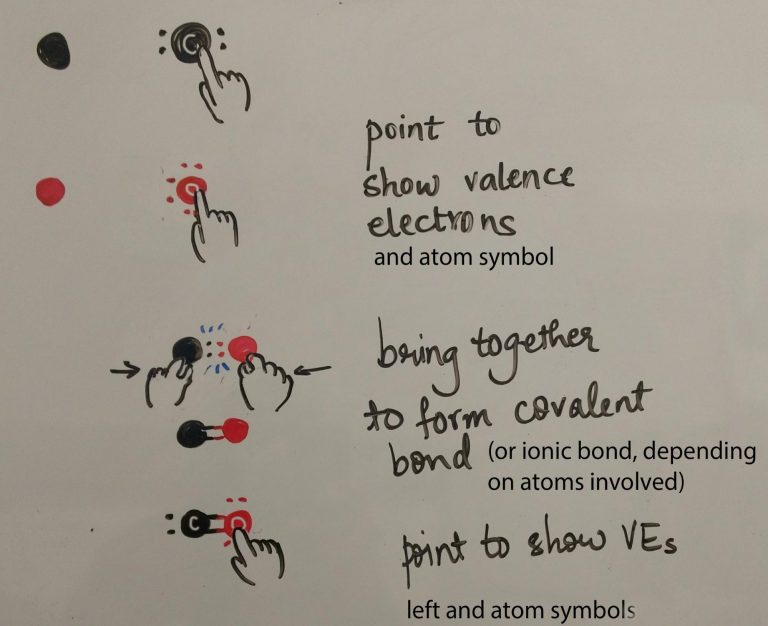

The atoms are represented with their valence electrons hovering outside the shell, so that it is clear how many bonds each can form. For our prototype, we chose to allow the creation of simple molecules like water, carbon dioxide and methane. Water and carbon dioxide are a good place to start: both consist of three atoms, but repulsion between valence electron pairs gives them different molecular structures (water is bent, while carbon dioxide is linear).
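The difference between water and carbon dioxide follows from VSEPR theory: what matters is the number of electron domains (bonds plus lone pairs) around the central atom, not just the number of atoms. A minimal sketch of that lookup, assuming neutral molecules with a single central atom; `VSEPR_SHAPES`, `central_atom_domains` and `molecular_shape` are hypothetical names, not taken from the prototype.

```python
from __future__ import annotations

# Molecular shape from the electron domains around the central atom (VSEPR).
# Keys are (bonding domains, lone pairs). Angles for shapes with lone pairs
# are the measured values for NH3 and H2O; lone pairs compress the ideal
# tetrahedral angle of 109.5 degrees.
VSEPR_SHAPES = {
    (2, 0): ("linear", 180.0),
    (3, 0): ("trigonal planar", 120.0),
    (4, 0): ("tetrahedral", 109.5),
    (3, 1): ("trigonal pyramidal", 107.0),
    (2, 2): ("bent", 104.5),
}


def central_atom_domains(valence_electrons: int, bond_orders: list[int]) -> tuple[int, int]:
    """Return (bonding domains, lone pairs) for the central atom of a neutral molecule.

    Each bond counts as one domain whatever its order, and uses one of the
    central atom's valence electrons per unit of bond order. The remaining
    electrons are assumed to sit in lone pairs.
    """
    unshared = valence_electrons - sum(bond_orders)
    if unshared < 0 or unshared % 2:
        raise ValueError("bonds exceed the valence or leave an unpaired electron")
    return len(bond_orders), unshared // 2


def molecular_shape(valence_electrons: int, bond_orders: list[int]) -> tuple[str, float]:
    """Look up the shape and approximate bond angle around a central atom."""
    return VSEPR_SHAPES[central_atom_domains(valence_electrons, bond_orders)]


print(molecular_shape(6, [1, 1]))        # water, O with two single bonds -> ('bent', 104.5)
print(molecular_shape(4, [2, 2]))        # carbon dioxide, C with two double bonds -> ('linear', 180.0)
print(molecular_shape(4, [1, 1, 1, 1]))  # methane, C with four single bonds -> ('tetrahedral', 109.5)
```

Counting each double bond as a single domain is what makes carbon dioxide linear, while the two lone pairs on oxygen are what bend water.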

The image markers also allow remote users to join the experience simply by looking at a specific marker, which determines where they are positioned in the space. Each remote user has an avatar to represent them, and their hands are visible to all users.
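Aligning users by marker amounts to a change of coordinate frames: a point tracked in one headset’s frame is expressed relative to the marker that headset sees, then placed in the shared space wherever that marker is assigned. A minimal sketch, assuming the headsets agree on the vertical axis so that only a yaw rotation and a translation are needed; `Pose`, `SEAT_POSES` and `to_shared_space` are hypothetical, and a full implementation would use complete 3D rotations.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Pose:
    """A position (x, y, z) plus a yaw angle in radians about the vertical y axis."""
    x: float
    y: float
    z: float
    yaw: float

    def apply(self, p: tuple) -> tuple:
        """Map point p from this pose's local frame into its parent frame (R p + t)."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        px, py, pz = p
        return (c * px + s * pz + self.x, py + self.y, -s * px + c * pz + self.z)

    def inverse_apply(self, p: tuple) -> tuple:
        """Map point p from the parent frame into this pose's local frame (R^T (p - t))."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        dx, dy, dz = p[0] - self.x, p[1] - self.y, p[2] - self.z
        return (c * dx - s * dz, dy, s * dx + c * dz)


# Hypothetical assignment of each marker's origin to a seat in the shared space.
SEAT_POSES = {
    "marker_a": Pose(0.0, 0.0, 0.0, 0.0),
    "marker_b": Pose(1.0, 0.0, 0.0, math.pi / 2),
}


def to_shared_space(point_in_headset: tuple, marker_id: str, marker_in_headset: Pose) -> tuple:
    """Express a tracked point (e.g. a fingertip) from one headset in the shared space.

    marker_in_headset is the marker's pose as detected in that headset's frame.
    """
    in_marker_frame = marker_in_headset.inverse_apply(point_in_headset)
    return SEAT_POSES[marker_id].apply(in_marker_frame)
```

Because every user’s hands and head pass through the same two transforms, a user who scans `marker_b` appears at that seat for everyone, which is also why an error in the seat assignment would place an avatar uncomfortably close to someone else.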

Initially, we had all the atoms available in the space for users to access, grouped by element. Predictably, this was not an ideal setup: it tempted users to immediately start building things on their own (or worse, toss atoms around). In our next stage of polish, we added an inventory system, which users can access by flipping their palm upwards. Once atoms are dragged out of the palm panel, users can communicate with each other to combine them into molecules, or put them back in the panel if they don’t need them.
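The palm flip can be detected from the direction the palm faces. A minimal sketch, assuming the hand tracker reports, each frame, a palm normal vector that points out of the palm (Leap Motion’s hand data does include a palm normal, but its API is not used or shown here); `PalmMenuToggle` and its angle thresholds are hypothetical.

```python
import math


class PalmMenuToggle:
    """Hypothetical palm-flip detector for opening an inventory panel.

    The menu opens when the palm normal comes within open_deg of world up and
    closes only once it drifts past close_deg, so small hand tremors near the
    threshold do not make the panel flicker (hysteresis).
    """

    def __init__(self, open_deg: float = 30.0, close_deg: float = 50.0):
        if close_deg < open_deg:
            raise ValueError("close_deg must be at least open_deg")
        self.open_cos = math.cos(math.radians(open_deg))
        self.close_cos = math.cos(math.radians(close_deg))
        self.is_open = False

    def update(self, palm_normal: tuple) -> bool:
        """Feed one frame's palm normal (x, y, z), with +y as world up."""
        x, y, z = palm_normal
        length = math.sqrt(x * x + y * y + z * z)
        if length == 0.0:
            return self.is_open  # no usable tracking data this frame
        up_alignment = y / length  # cosine of the angle between the normal and up
        if not self.is_open and up_alignment >= self.open_cos:
            self.is_open = True
        elif self.is_open and up_alignment < self.close_cos:
            self.is_open = False
        return self.is_open
```

With the defaults, a palm tilted 40 degrees from vertical keeps an already open panel open but does not open a closed one, which is the point of using two thresholds.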

Once a molecule is correctly created, a tooltip appears to show the name and chemical formula of the molecule.
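Showing the tooltip only for a correct molecule implies some check of the structure. A minimal sketch, assuming "correct" means every atom has used exactly its usual number of covalent bonds and the resulting formula matches one of the supported molecules; `BONDING_CAPACITY`, `KNOWN_MOLECULES` and the functions are hypothetical. The list returned by `unsatisfied_atoms` is also the kind of information that could mark errors for learners, as planned in the early design.

```python
from __future__ import annotations

from collections import Counter

# Hypothetical bonding capacity per element: the number of covalent bonds
# each atom typically forms in a neutral molecule.
BONDING_CAPACITY = {"H": 1, "C": 4, "O": 2}

# Hypothetical table of molecules that earn a tooltip, keyed by Hill formula.
KNOWN_MOLECULES = {"H2O": "water", "CO2": "carbon dioxide", "CH4": "methane"}


def hill_formula(elements: list) -> str:
    """Hill order: C first, then H, then the rest alphabetically; without C, all alphabetical."""
    counts = Counter(elements)
    if "C" in counts:
        rest = sorted(e for e in counts if e not in ("C", "H"))
        order = ["C"] + (["H"] if "H" in counts else []) + rest
    else:
        order = sorted(counts)
    return "".join(e if counts[e] == 1 else f"{e}{counts[e]}" for e in order)


def unsatisfied_atoms(elements: list, bonds: list) -> list:
    """Indices of atoms whose bond orders do not add up to their bonding capacity.

    bonds is a list of (i, j, order) tuples indexing into elements.
    """
    used = [0] * len(elements)
    for i, j, order in bonds:
        used[i] += order
        used[j] += order
    return [k for k, e in enumerate(elements) if used[k] != BONDING_CAPACITY[e]]


def tooltip_for(elements: list, bonds: list) -> str | None:
    """Return 'name (formula)' for a completed, known molecule, else None."""
    if unsatisfied_atoms(elements, bonds):
        return None
    formula = hill_formula(elements)
    name = KNOWN_MOLECULES.get(formula)
    return f"{name} ({formula})" if name else None


print(tooltip_for(["O", "H", "H"], [(0, 1, 1), (0, 2, 1)]))  # water (H2O)
print(tooltip_for(["C", "O", "O"], [(0, 1, 1), (0, 2, 1)]))  # None: carbon and oxygen are under-bonded
```

This sketch does not verify that all atoms form one connected structure; two separate finished molecules simply produce an unknown formula and no tooltip.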

Results

The interactive, hands-on nature of the prototype was a success with most testers. One note of caution: playing with the atoms is so engaging that a teacher in the experience needs a strong way to control student interactions.

Positive feedback for high-fives, in the form of shining particle effects, adds a nice touch to what is essentially a collaborative task. The ergonomics of such a task shouldn’t be too taxing, given that current lab work also alternates between intervals of building and intervals of writing or analyzing. In general, however, ergonomics are an important factor when designing tasks that require extended physical interaction with virtual objects.

We iterated on the size of the atoms until they were comfortable for most people to grasp freely. The hydrogen atoms are smaller than the oxygen and carbon atoms, and we observed many users defaulting to pinching the hydrogen atoms while grabbing the oxygen and carbon atoms. This was an interesting echo of what we found with our earlier line prototype.

We initially considered a more accurate representation of valence electrons, with tiny electrons revolving randomly around the atoms, but this proved too distracting. What students need to know is the number of valence electrons on each atom, so the electrons were changed to small spheres. However, the spheres aren’t fixed in place: they retain some electron-like movement that can be observed when an atom is dragged around. This middle-ground solution worked well, simulating the feel of electrons while conveying the information students need.
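One way to get this kind of trailing, electron-like motion is a damped spring that pulls each sphere toward a fixed point on its atom. A minimal sketch, assuming per-frame updates at a fixed time step; `ElectronSphere`, `follow` and the stiffness and damping values are hypothetical, chosen only to illustrate an underdamped, slightly wobbly response, and are not the prototype’s actual implementation.

```python
from dataclasses import dataclass, field


@dataclass
class ElectronSphere:
    """One valence-electron sphere that trails a point on its atom."""
    pos: list = field(default_factory=lambda: [0.0, 0.0, 0.0])
    vel: list = field(default_factory=lambda: [0.0, 0.0, 0.0])


def follow(sphere: ElectronSphere, anchor: tuple, dt: float,
           stiffness: float = 120.0, damping: float = 12.0) -> None:
    """Advance the sphere one frame toward its anchor with a damped spring.

    Uses semi-implicit Euler: velocity is updated first, then position.
    With these defaults the spring is underdamped, so the sphere overshoots
    slightly and wobbles before settling when the atom is moved.
    """
    for k in range(3):
        accel = stiffness * (anchor[k] - sphere.pos[k]) - damping * sphere.vel[k]
        sphere.vel[k] += accel * dt
        sphere.pos[k] += sphere.vel[k] * dt


# The atom is dragged 10 cm along x, so the sphere's anchor jumps with it.
sphere = ElectronSphere()
anchor = (0.1, 0.0, 0.0)
trail = []
for _ in range(180):  # two seconds at 90 frames per second
    follow(sphere, anchor, dt=1 / 90)
    trail.append(sphere.pos[0])
```

Raising `damping` toward critical damping removes the wobble; lowering `stiffness` makes the spheres lag further behind a fast drag.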

One of our last additions was switching to an inventory system, so that users had some control over how many atoms were in the scene at once. This might be a better use of the palm flip menu than our earlier attempt in the 2D Geometry demo. It also leads to a less cluttered space and gives the user more control.

We also iterated on the visuals of bond formation a few times. The bond line gets thicker and solidifies as the atoms move closer, lending a very authentic feel to the interaction. It also needed to be clear that both atoms must be grabbed for a bond to form between them. The atoms have a gold edge when grabbed, and the gold bond between two grabbed atoms solidifies when they are brought close together. In our early prototype, where a number of atoms were pre-spawned, we found that users would grab one atom and physically move it toward another to create a bond; the idea that both atoms need to be grabbed had to be conveyed verbally. This problem is hopefully alleviated somewhat now that users deliberately spawn the atoms they wish to bond, since any new atom is already grabbed when it is initialized. However, there might still be a need to indicate to the user that both atoms need to be grabbed, perhaps through some sort of dynamic feedback.
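The bond feedback combines two rules: nothing is shown unless both atoms are grabbed, and the bond thickens as the atoms approach until it solidifies. A minimal sketch of that mapping, assuming distances in meters; `BondPreview`, `bond_preview` and every threshold value are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BondPreview:
    visible: bool
    thickness: float  # radius of the rendered bond cylinder, in meters
    solid: bool       # True once the atoms are close enough for the bond to form


def bond_preview(distance: float, a_grabbed: bool, b_grabbed: bool,
                 snap_distance: float = 0.05, max_distance: float = 0.20,
                 min_thickness: float = 0.002, max_thickness: float = 0.008) -> BondPreview:
    """Map the distance between two atoms to the bond visual."""
    # Both atoms must be held, and within range, for anything to be shown.
    if not (a_grabbed and b_grabbed) or distance > max_distance:
        return BondPreview(False, 0.0, False)
    # t is 0.0 at max_distance and 1.0 at (or inside) snap_distance.
    t = (max_distance - distance) / (max_distance - snap_distance)
    t = min(max(t, 0.0), 1.0)
    thickness = min_thickness + t * (max_thickness - min_thickness)
    return BondPreview(True, thickness, distance <= snap_distance)
```

The dynamic feedback suggested above could hook into the first branch: when exactly one atom is grabbed, return a distinct state (for example, to pulse the other atom’s edge) instead of an invisible preview.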

Given the standard saturated colors of the atoms, it was important to incorporate a rim shader on the edges of the spheres to ensure visibility. When the atoms are occluded by physical objects, another shader renders them as a grayscale outline. This was a big win in helping users locate the virtual objects: simply not rendering virtual objects hidden behind physical ones might have led to confusion and frustration, which the shader avoided.
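The shaders themselves are not described in detail. A minimal sketch of the math a rim shader typically evaluates per pixel, written in Python for readability rather than in a shading language: a Fresnel-style term that brightens the sphere where its surface turns away from the viewer, plus a grayscale conversion of the kind an occlusion pass could use. The function names, the white rim color and the exponent are all assumptions.

```python
def rim_intensity(normal: tuple, view_dir: tuple, power: float = 3.0) -> float:
    """Fresnel-style rim term for unit vectors.

    view_dir points from the surface toward the camera. Returns 0.0 where the
    surface faces the camera and 1.0 at the silhouette; a higher power makes
    the rim thinner.
    """
    facing = sum(n * v for n, v in zip(normal, view_dir))
    facing = min(max(facing, 0.0), 1.0)
    return (1.0 - facing) ** power


def shade_with_rim(base_rgb: tuple, normal: tuple, view_dir: tuple,
                   rim_rgb: tuple = (1.0, 1.0, 1.0), power: float = 3.0) -> tuple:
    """Blend the atom's base color toward the rim color near its edges."""
    r = rim_intensity(normal, view_dir, power)
    return tuple(b * (1.0 - r) + c * r for b, c in zip(base_rgb, rim_rgb))


def occluded_gray(rgb: tuple) -> float:
    """Rec. 601 luma, one common way to collapse a color to grayscale."""
    red, green, blue = rgb
    return 0.299 * red + 0.587 * green + 0.114 * blue
```

Because the rim depends only on the angle between the surface and the view direction, it outlines a saturated red oxygen or black carbon atom equally well against any background seen through the passthrough cameras.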

The virtual avatars of remote users have a significant presence in the physical world, as evidenced by users feeling uncomfortable when the avatars invaded their personal space. This makes it all the more important that players spawn in the correct location.

We did not have the chance to test the prototype with school students, so we could not gauge much of its pedagogical impact. However, we accomplished the goal of a more interactive remote learning experience, and there is scope to add a more scripted collaborative task for learning.

Future Work

The major hurdle we tackled with this prototype was a technical one. Many design questions remain to be explored regarding how to incorporate a teacher into the experience, as well as the different possible student interactions.

Learner-Teacher Interactions

- Teacher annotating on student view

The ability for a teacher to annotate the student’s interactive model in some form could be powerful. However, this raises the question of the teacher’s physical presence. If the teacher is represented as a pair of floating hands that can descend into the student’s work, does that feel too intrusive or distracting? Users’ discomfort when avatars are located too close already shows that virtual avatars feel physically present in the space.

- Teacher setting task milestones and viewing progress

Most of the experts we spoke to agreed that the ability for a teacher to set milestones for the task, and to assess student progress against those milestones, is an important addition. They added that expert teachers might be able to help students based on a cursory look at the milestone data, but novice teachers may need to drill down further to understand where students need help. Devising a system to display this information is important, and some of it may be presented on tablet or laptop interfaces.

Related: Project Lumilo

- Collaboration script incorporated into experience

Martina Rau’s research finds that teacher prompting helps students build concept-representation connections: when a teacher prompts a student to explain a concept, the student builds a better connection between the concept and its virtual representation. Integrating collaboration scripts into the AR experience, to help the teacher prompt the student at the right time, could be one way of achieving this. In addition, teacher intervention was a smaller factor in increasing student understanding when the experience was more scaffolded. In essence, if the technology can prompt students in roughly the same way a teacher might, it could show positive results.

- Teacher voice interactions

This is a relatively unexplored area, but if teachers can augment their abilities in the classroom through a voice interface, it has the potential to be powerful.

Learner-Content Interactions

- Error detection and notification for students

It is clearly important in the learning process for students to make mistakes, unlearn and relearn concepts. Thus, any interactive experience dealing with a task-based learning format needs to incorporate appropriate feedback loops, either through teacher intervention or automatic error detection.

- Viewing the physical occurrence and properties of the compound once the molecule is created

Similar to the pedestal in the Biomes demo, it might be compelling to have a space where users can place their finished molecule and view more information about it. For example, it is easy for students to imagine what water looks like when learning about water molecules, but not so for other compounds they encounter as they keep learning. A space that shows how the compounds occur physically, along with properties like melting point and boiling point, could be a useful tool.

- Switching between representations of the same molecule

A strength of a virtual molecule is that it can switch between different representations to highlight different properties. For example, the ball-and-stick model conveys bond angles and lengths, while an electrostatic potential map conveys the distribution of charge and more accurately reflects the sizes of the atoms. However, too much context switching might be ill-advised for students who have just begun learning the concepts.