Game Design:

Maria:

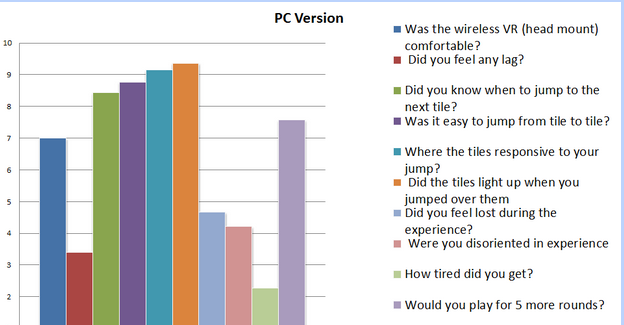

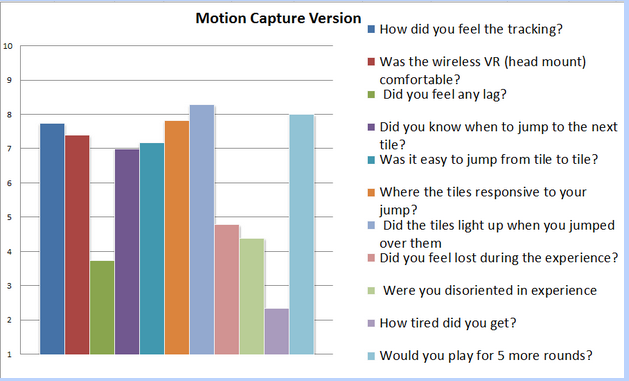

This week we reviewed the information gathered at Saturday's playtest. Although we ran into technical difficulties during the playtest, we were able to recover by running a PC version. The charts show the results for both versions. Overall the results were good: people really liked moving around and listening to the beat. We iterated on what we learned and scheduled another playtest for Friday.

Friday's playtest focused on three indirect control mechanics meant to get the player to move around the piano successfully. I researched and finalized the design of these three mechanics. A description of the three methods can be found here:

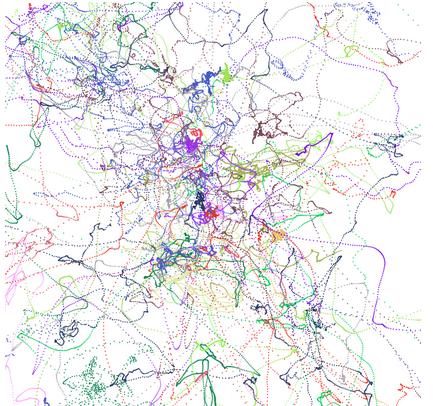

I also created the playtest survey for Friday's test, and this is the heat map of people's movements.

Game Production:

Joseph:

This week, I developed the pattern builder for our prototype and changed the policy for where the next beat is generated. I've also set up a sprint schedule for the team. Here is the schedule:

3/29 – 4/4 Sprint 2: Level 1 – Level 3 complete

- Recording merged into project

- Music analysis and beat system should be done

- Indirect control done

- Background draft

4/5 – 4/11 Sprint 3: Level 4, Level 5 complete

- Light effects done

- All levels done

- Background / environment done

- Start recording for the 30-second and 3-minute promo videos

4/12 – 4/18 Sprint 4: 30-second promo done, website update done

- Bugs fixed

- Set up game start and game end scenes

- Scoring system

- Output to Gear VR

Programming:

Fan:

This week, I helped the team build the first prototype for our final project. I finished a small tool for generating and reading beats, and I am also working on rewriting the wrapper. Next, I will focus on the recording part.

Kanglei:

This week I helped the team implement three methods of indirect control. We dropped the first one because its effect was not noticeable, and we tested the other two in the playtest.

I also created dynamic weather, but the PC in the motion capture room is currently too old to run it. Hopefully we can use it later.

Michael:

This week Michael focused on two things that improve the experience of Pinacia.

- Orientation Drift Correction

The Oculus DK1 always develops orientation drift once it has been active long enough. This is a serious problem when physical-virtual mapping is used, which is exactly this project's case. Michael first tried to use data from motion capture for the correction, but mocap head tracking needs face capture, and some simple tests showed it to be inaccurate. Michael then tried magnetic calibration, since the magnetic field in the motion capture room is quite stable. The result hasn't been tested yet.
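As an illustration of the general idea of pulling a drifting yaw back toward a stable reference heading, here is a minimal Python sketch. It is not the Oculus SDK's calibration and not the project's actual code: the function names, the `gain` value, and the assumption that a reference yaw (for example, one derived from the room's magnetic field) is available each frame are all hypothetical.

```python
def wrap_degrees(angle):
    """Wrap an angle in degrees to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def correct_yaw(tracked_yaw, reference_yaw, gain=0.02):
    """Nudge a drifting yaw toward a reference heading.

    tracked_yaw:   yaw reported by the headset, in degrees (may have drifted)
    reference_yaw: yaw from a stable reference, in degrees (assumed available)
    gain:          fraction of the error removed per call (hypothetical value)
    """
    # Use the shortest signed angular difference so the correction
    # never takes the long way around the circle.
    error = wrap_degrees(reference_yaw - tracked_yaw)
    return wrap_degrees(tracked_yaw + gain * error)


# Example: a yaw that has drifted 30 degrees is pulled back gradually.
yaw = 30.0
for _ in range(500):
    yaw = correct_yaw(yaw, reference_yaw=0.0)
```

A small gain keeps the correction too slow for the player to notice, at the cost of taking longer to remove a large accumulated drift.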

- Partial Body Visualization

Playtesters complained about the view penetrating into the body, which was a terrible experience. Michael first tried a shader that makes body parts gradually more transparent as they get closer to the camera. Although the result looked good, it did not completely solve the penetration problem: strange images still appeared in some situations because of back-face culling. Michael then adopted the partial body visualization idea, which is simple and straightforward. Showing only the arms and feet looks strange at first glance but works pretty well.
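For reference, the distance-based fade that was tried first can be sketched as a simple mapping from camera distance to opacity. This is only an illustrative Python sketch, not the shader used in the project: the function name and the `near` and `far` thresholds (in meters) are hypothetical.

```python
def fade_alpha(distance, near=0.15, far=0.5):
    """Return opacity in [0, 1] for a body part at `distance` from the camera.

    Parts closer than `near` are fully transparent, parts farther than `far`
    are fully opaque, and opacity ramps linearly in between.
    Both thresholds are hypothetical values for illustration.
    """
    if far <= near:
        raise ValueError("far must be greater than near")
    t = (distance - near) / (far - near)
    # Clamp so distances outside the ramp map to exactly 0 or 1.
    return max(0.0, min(1.0, t))
```

A fade like this only changes opacity. It does not stop the camera from ending up inside the mesh, which is where the culling artifacts described above come from.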

Art:

Jiaqi:

This week I developed the concept art and built the castle model. I also helped the team pick the music for our game.