Welcome back to our development blog. This was a very productive week for the team, as we got the green light to move forward with the idea we discussed in last week’s post. If you missed it, below is a short description of what we will be creating for the rest of the semester.

Kaleidoscope is creating an interactive experience for Carnegie Mellon University’s Askwith Kenner Global Languages and Cultures room, located in the Tepper Quadrangle. This interactive experience explores the concept of cultural awareness and competency through the use of real-time depth capturing and data visualization techniques. Guests infer another person’s identity through a progressive series of abstract traits and attributes and, in the end, are presented with a visualized contrast between their assumptions and reality. Through this experience, we hope to encourage reflection about how culture shapes our perceptions of identity, and how these perceptions may not be entirely representative of the truth.

Since we got our concept approved later than expected, our team jumped right into production and held our very first playtest with a paper prototype (more on this below).

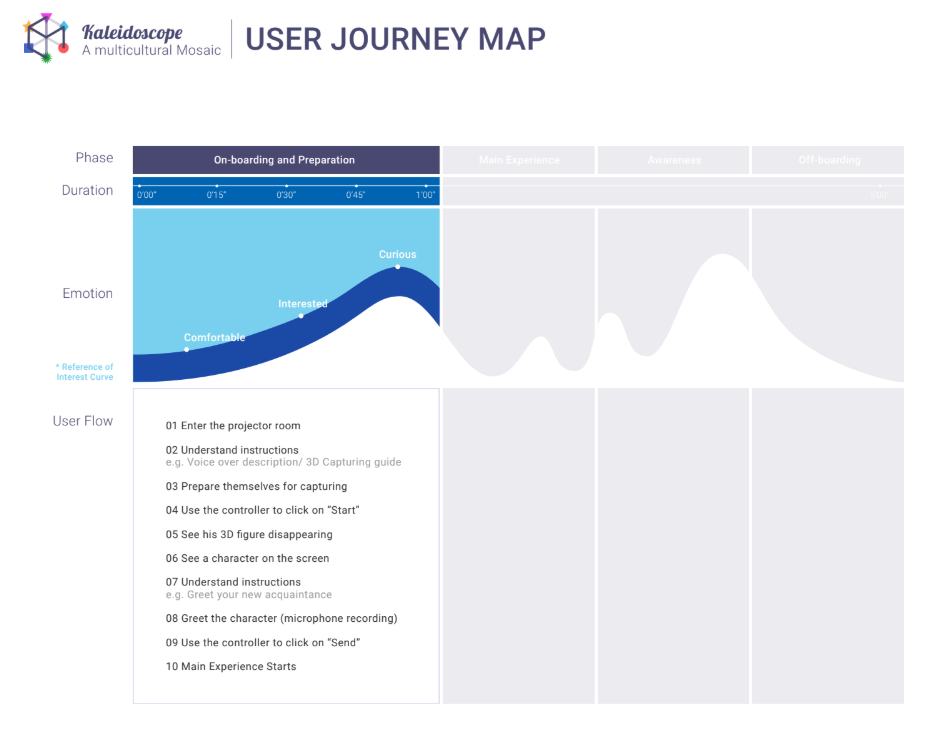

Based on this vision, our designer created the user journey map above, which we referenced during our first playtest.

Our designer shared some of the design challenges she is currently facing:

- How to balance the amount of discomfort while still allowing a smooth user flow that matches the interest curve shown above?

- How to generate the question list for the main interaction?

In terms of our technical progress, we are using an Intel RealSense D435 camera to volumetrically capture our guests as they record their greeting in the experience. Intel provides an SDK that allows us to use the camera in a Unity project, but the SDK does not have an API for previewing, recording, and playing back point clouds on command. By default, the RealSense in Unity reads the provided configuration settings and locks them in when the camera is enabled. So this week we implemented a controller script for the RealSense that can change these settings mid-experience. The script switches camera modes by setting the configuration to the desired mode (live view, record, or playback) and then disabling and re-enabling the camera so that the new settings take effect.
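To make the mode-switching idea concrete, here is a simplified sketch in Python. It is not the Unity script itself and does not use the RealSense SDK: `StandInCamera`, `CameraConfig`, `CameraModeController`, and the file name `greeting.bag` are all hypothetical names chosen for illustration. The only behavior it assumes is the one described above, namely that the camera reads its configuration once when it is enabled.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class CameraMode(Enum):
    LIVE = "live"
    RECORD = "record"
    PLAYBACK = "playback"


@dataclass
class CameraConfig:
    mode: CameraMode = CameraMode.LIVE
    file_path: Optional[str] = None  # recording target or playback source


class StandInCamera:
    """Hypothetical stand-in for a camera that locks its settings on enable."""

    def __init__(self, config: CameraConfig) -> None:
        self.config = config  # can be edited at any time
        self.active_mode: Optional[CameraMode] = None  # what is actually running
        self.enabled = False

    def enable(self) -> None:
        # The configuration is read once here and stays locked until re-enabled.
        self.active_mode = self.config.mode
        self.enabled = True

    def disable(self) -> None:
        self.active_mode = None
        self.enabled = False


class CameraModeController:
    """Switches modes by updating the config, then restarting the camera."""

    def __init__(self, camera: StandInCamera) -> None:
        self.camera = camera

    def switch_to(self, mode: CameraMode, file_path: Optional[str] = None) -> None:
        if mode is not CameraMode.LIVE and file_path is None:
            raise ValueError("record and playback modes need a file path")
        self.camera.config.mode = mode
        self.camera.config.file_path = file_path
        # Disable and re-enable so the new settings are picked up.
        if self.camera.enabled:
            self.camera.disable()
        self.camera.enable()


if __name__ == "__main__":
    camera = StandInCamera(CameraConfig())
    camera.enable()

    # Editing the config alone has no effect on a running camera.
    camera.config.mode = CameraMode.RECORD
    print(camera.active_mode)

    controller = CameraModeController(camera)
    controller.switch_to(CameraMode.RECORD, "greeting.bag")
    print(camera.active_mode)
    controller.switch_to(CameraMode.PLAYBACK, "greeting.bag")
    print(camera.active_mode)
```

The point of routing every change through one `switch_to` call is that the rest of the experience never has to remember the restart step; it only asks for a mode.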

We also began integrating the various technical parts of the experience into one scene to start building the pipeline for the overall experience. Next week we will be working on a manager script that triggers the various events and pieces of the experience, so that we have a full run-through to playtest by the end of the week.

Playtest

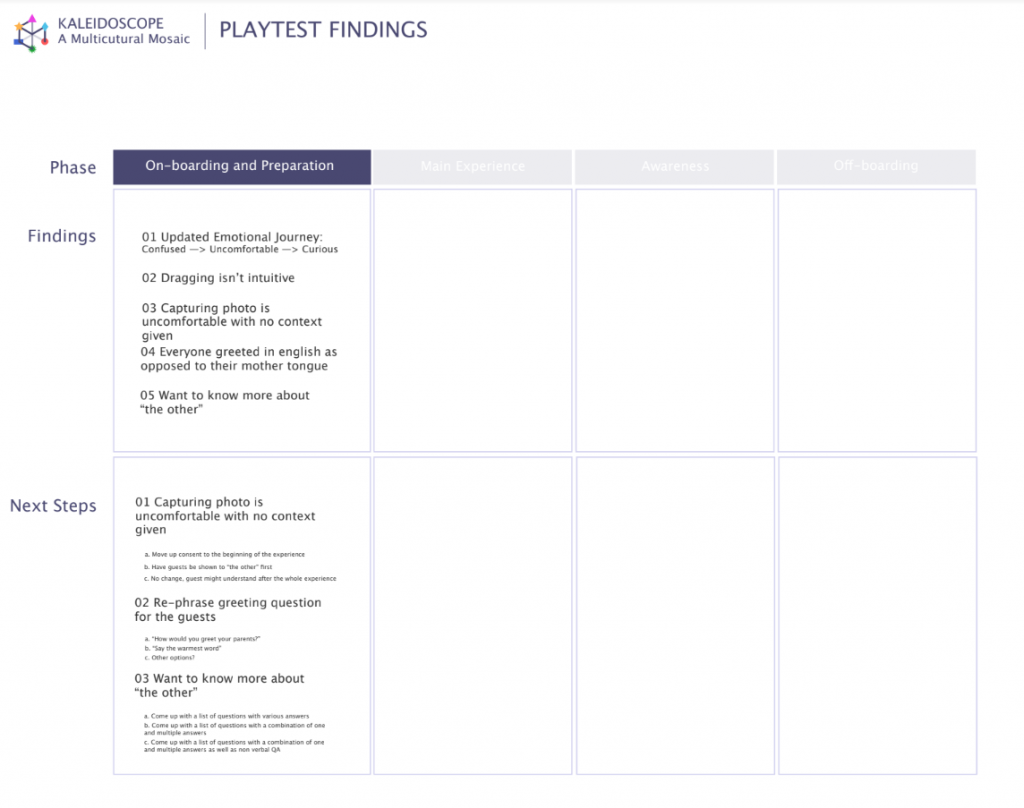

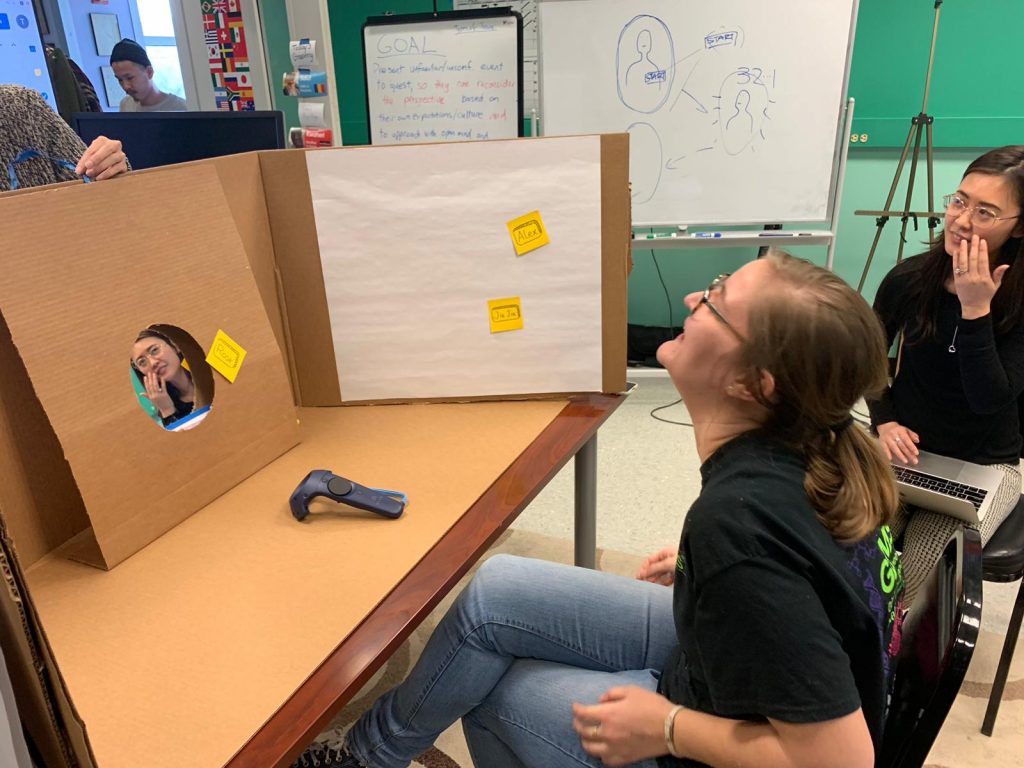

As mentioned previously, we had our very first paper prototype playtest this week as well (YAY!). The team created a cave-like structure out of cardboard to playtest the onboarding experience. We identified the findings below, along with the appropriate next steps to ensure the experience follows the emotional curve that we want to achieve.

As we get closer to mid-semester, we are going full speed and will be conducting our second playtest next week! Stay tuned!

Thank you for reading,

Kaleidoscope