Only two and a half weeks remain before the Soft Opening. We felt the tension as the deadline drew closer, and this week we had to choose which layers to keep and which to keep developing. We no longer had time to change our plan before the soft opening.

To recap the feedback from our last client meeting: our clients think the jumping mechanic should not take too much time and effort. They suggested that we push RL agent interactions to another level. We should keep the good parts of the current layer, get rid of the distracting ones, and develop more interesting interactions between RL agents.

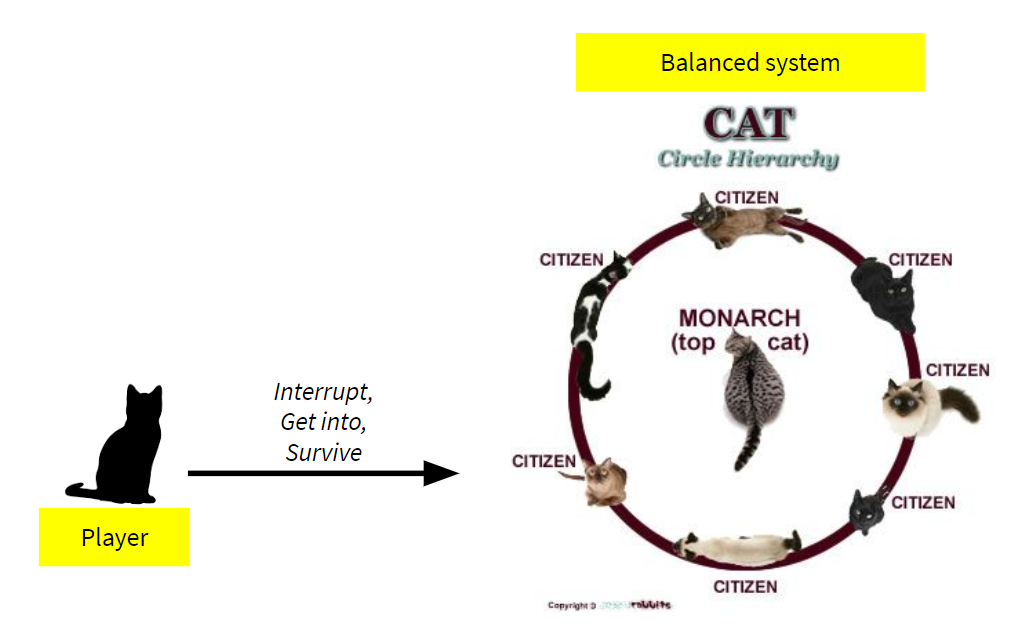

Because of the time constraint, we held our last design brainstorm within the team and came up with two ideas. The first is inspired by the social hierarchy of real-life cats: cats choose food according to their power rank in the community. The player is a newcomer cat trying to survive, understand the rules, and disrupt the power dynamics.

In this idea, all the cats except the player are RL agents. We wanted to avoid the frustrations and limitations caused by the pathfinding behavior in the previous hippo demo. We found that even when a hippo has decided where to go, it sometimes has trouble reaching its destination because of pathfinding. We looked for a way to show the decisions that RL agents make more clearly, and this Cat Hierarchy game could solve that problem.

The clients also mentioned that the interactions between RL agents need more complexity. For our second idea, we came up with a new game theme: on a happy little farm, the sheep try to escape the fence to eat apples while the shepherd dog tries to keep them inside.

We shared these game ideas within the group and with the faculty. Because we only have two weeks left before the soft opening, and it takes a long time to tune the parameters and train the RL agents, we decided to compromise and fold our ideas into our current hippo demo.

First, we all agreed on two goals we would like to achieve in our final product:

- We’d like to see more about how the agents’ decision-making process is influenced by other agents and players.

- We’d like the RL agents to learn to behave unexpectedly yet naturally.

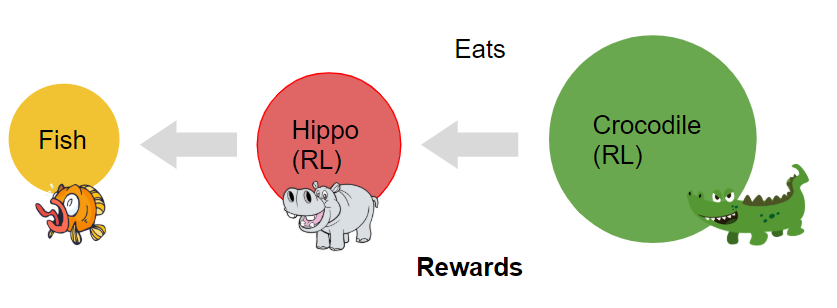

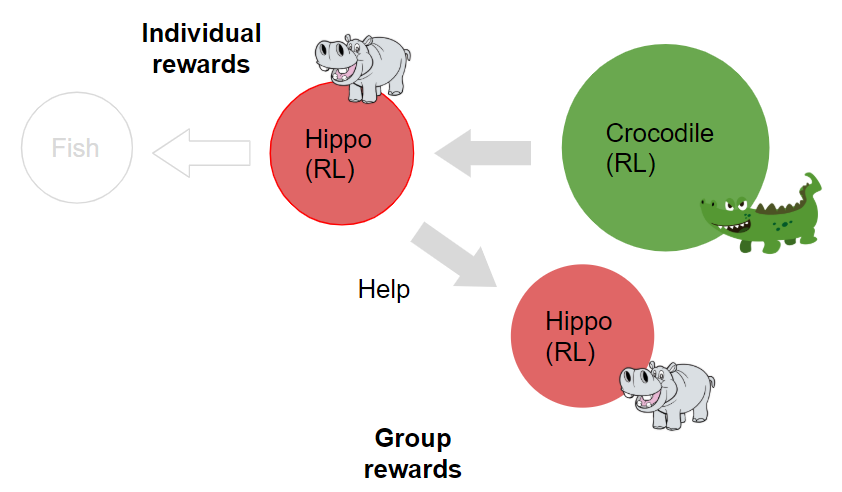

We reverted to the previous layer, in which hippos eat only one kind of food. With only one kind of food, players can observe the hippos’ behavior more clearly. Then we added one more RL agent, the Crocodile, which eats hippos.

So now the hippo gets rewards for eating fish and is punished if the crocodile catches it, which gives it a reason to avoid the crocodile. Hippos decide what to do next according to these rewards and punishments.
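To make the reward structure concrete, here is a minimal sketch of how a per-step hippo reward could be computed. The names (`StepEvents`, `hippo_step_reward`) and the numeric values are our illustrative assumptions, not the tuned values or the actual code from the demo.

```python
from dataclasses import dataclass

# Illustrative values only (assumptions, not the demo's tuned parameters).
FISH_REWARD = 1.0      # reward for eating a fish
CAUGHT_PENALTY = -1.0  # punishment for being caught by the crocodile


@dataclass
class StepEvents:
    """What happened to one hippo during a single simulation step."""
    ate_fish: bool = False
    caught_by_crocodile: bool = False


def hippo_step_reward(events: StepEvents) -> float:
    """Sum the reward and punishment signals for one step."""
    reward = 0.0
    if events.ate_fish:
        reward += FISH_REWARD
    if events.caught_by_crocodile:
        reward += CAUGHT_PENALTY
    return reward
```

With a signal like this, the agent is never told to "avoid the crocodile" directly. It only sees that being caught lowers its total reward, and avoidance is something it has to learn through training.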

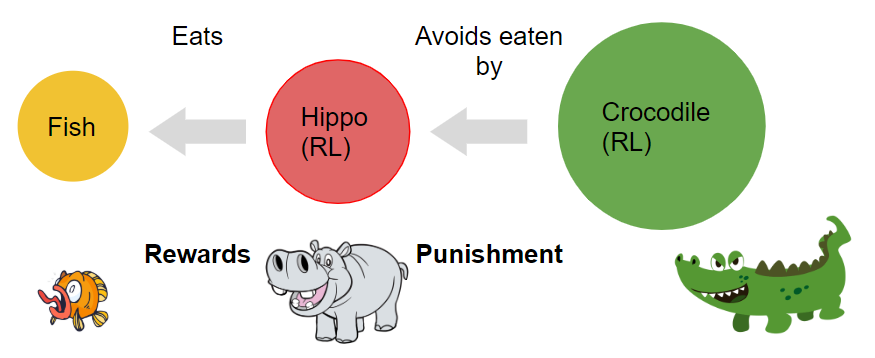

Besides adding another RL agent, we also want hippos to have multiple rewards that trigger interesting and unexpected behaviors between hippos. We set things up so that hippo allies have time to save a bitten hippo before the crocodile eats it.

The decision point is this: when the individual reward is smaller than the group reward, the hippo allies will choose to save the hippo in danger. At the same time, if the bitten hippo is not helped, it receives a bigger punishment after a certain period of struggle time.
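The rescue rule and the struggle timer described above can be sketched roughly as follows. This is only an illustration under our own assumptions: the function names, the time limit, and the penalty value are hypothetical. In the trained game the agents learn this trade-off from the reward signal rather than running an explicit comparison.

```python
# Illustrative values only (assumptions, not the demo's tuned parameters).
STRUGGLE_TIME_LIMIT = 5.0  # seconds a bitten hippo can struggle before being eaten
UNRESCUED_PENALTY = -2.0   # the bigger punishment when no ally helps in time


def ally_prefers_rescue(individual_reward: float, group_reward: float) -> bool:
    """An ally favors rescuing when the individual reward is smaller than the group reward."""
    return individual_reward < group_reward


def unrescued_penalty(seconds_struggling: float, rescued: bool) -> float:
    """Extra punishment for a bitten hippo once the struggle time runs out."""
    if rescued or seconds_struggling < STRUGGLE_TIME_LIMIT:
        return 0.0
    return UNRESCUED_PENALTY


# Example: eating a fish is worth 1.0 to the ally, rescuing is worth 2.0 to the group.
print(ally_prefers_rescue(individual_reward=1.0, group_reward=2.0))
print(unrescued_penalty(seconds_struggling=6.0, rescued=False))
```

Changing the gap between the two reward values is one way to tune how often allies abandon their own fish to help, which is the kind of parameter that takes time to balance during training.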

We will also add UI elements that let the player choose the map at the beginning and interact with different objects, including rocks (as obstacles), hippos, and fish.

After we finalized all the design elements in the scene, our programmers finished implementing the main system and began re-training the agents. We have already found a lot of interesting behaviors in each iteration, and we cannot wait to share them with you next time!